Many teams still depend on code written years ago, often by developers who have already left the company. These systems still run daily operations, but the code is hard to follow, risky to change, and rarely documented well.

The scale of the issue is hard to ignore. For example, by one widely cited estimate, 95% of ATM transactions still rely on COBOL, a language designed in 1959. This dependency drives up maintenance costs, increases security exposure, and slows down progress.

At the same time, teams are expected to ship new features, fix issues, and keep things moving. That tension is where problems start: every change to fragile code risks breaking something that works. Refactoring gives you a way forward.

In this guide, you’ll learn how to refactor legacy code to lower risk, make systems easier to maintain, and extend the value of what you already have.

What is legacy code refactoring?

Legacy code refactoring is the process of improving and restructuring existing code without changing its behavior. The goal is to make the code clearer, easier to understand, and simpler to maintain, while also reducing risks such as security vulnerabilities and rising maintenance costs.

Instead of replacing the entire system, refactoring allows teams to modernize legacy applications step by step. This approach improves performance, reliability, and flexibility over time, without the disruption and risk that come with a full rewrite.

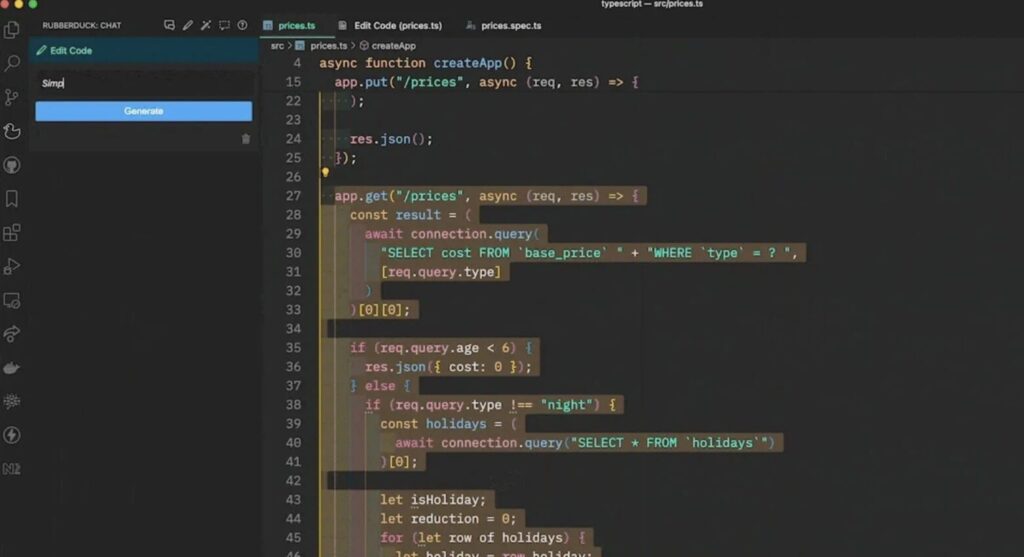

AI Refactoring Capabilities

| Capability | What the AI tool does |
| --- | --- |
| Variable renaming across scope | AI tools track how variables are used across files and update them consistently, without breaking dependencies or hidden references. |
| Function extraction and decomposition | Complex functions are split into smaller, easier-to-manage parts, while preserving correct parameter handling and return values. |
| Dead code elimination | Unused imports, unreachable code, and outdated function calls are identified and removed without affecting active logic. |
| Documentation generation | AI reviews how functions behave and adds clear comments, docstrings, and inline explanations where they’re missing. |
| Code style consistency | Formatting, naming, and structure are aligned across the codebase, so teams work with a consistent and predictable standard. |
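To make function extraction concrete, here is a minimal Python sketch. The invoice function and all of its names are hypothetical, invented for illustration. The point is that the refactored version splits validation and pricing into separate helpers while returning exactly the same result as the original.

```python
# Hypothetical legacy function: validation, pricing, and formatting in one place.
def invoice_line_legacy(quantity, unit_price, tax_rate):
    if quantity <= 0 or unit_price < 0:
        raise ValueError("invalid line item")
    subtotal = quantity * unit_price
    total = subtotal + subtotal * tax_rate
    return f"{quantity} x {unit_price:.2f} = {total:.2f}"


# After extraction: each helper has one job, and the public function
# keeps the same parameters, return value, and error behavior.
def validate_line(quantity, unit_price):
    if quantity <= 0 or unit_price < 0:
        raise ValueError("invalid line item")


def line_total(quantity, unit_price, tax_rate):
    subtotal = quantity * unit_price
    return subtotal + subtotal * tax_rate


def invoice_line(quantity, unit_price, tax_rate):
    validate_line(quantity, unit_price)
    total = line_total(quantity, unit_price, tax_rate)
    return f"{quantity} x {unit_price:.2f} = {total:.2f}"
```

Comparing `invoice_line` against `invoice_line_legacy` on the same inputs is the simplest possible check that the extraction did not change behavior.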

Best practices for AI refactoring legacy code

Below are practical best practices for refactoring legacy code that you can apply in real workflows. They follow the same fundamentals as any solid refactoring approach: reliable testing, clear constraints, disciplined code review, built-in security checks, and controlled, step-by-step deployments.

1. Lock behavior before you refactor legacy code

Legacy refactoring breaks down when you rely on what you think the code does instead of what it actually does. Before AI touches anything important, you need to lock the current behavior in place.

What does this look like?

- Characterization tests: These tests capture how the system behaves today, including edge cases that may look odd but still matter.

- Golden files or snapshot checks: Useful for validating complex outputs where small changes are hard to detect manually.

- A focused regression harness: Cover the flows that carry real risk, such as billing, authentication, pricing logic, and data transformations.

This step is often overlooked, yet it addresses the main risk of AI refactoring: silent changes in behavior. It also sets a clear standard for reviews. If tests fail, the change must be explained and intentional. Use it as a hard rule: no characterization tests means no AI refactoring in that area.
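A characterization test backed by a golden file can be sketched in a few lines of Python. Everything here is an assumption made for illustration: `legacy_shipping_fee` stands in for whatever legacy function you need to pin down, and the input cases and file layout are invented.

```python
import json
import tempfile
from pathlib import Path


def legacy_shipping_fee(weight_kg, country):
    """Hypothetical legacy function whose current behavior we want to lock."""
    if country == "US":
        fee = 5.0 + 1.2 * weight_kg
    else:
        fee = 12.0 + 2.5 * weight_kg
    # Odd-looking rule that downstream systems may depend on: keep it.
    if weight_kg == 0:
        fee = 0.0
    return round(fee, 2)


# Inputs chosen to cover normal cases and the odd edge case.
CASES = [(0, "US"), (1, "US"), (10, "DE"), (0.5, "FR")]


def snapshot(func, cases):
    """Record current outputs, keyed by a readable description of the input."""
    return {f"{w}|{c}": func(w, c) for w, c in cases}


def check_against_golden(func, cases, golden_path):
    """Create the golden file on first run; afterwards return any differences.

    The result maps each changed case to a (golden, current) pair.
    """
    current = snapshot(func, cases)
    if not golden_path.exists():
        golden_path.write_text(json.dumps(current, indent=2, sort_keys=True))
        return {}
    golden = json.loads(golden_path.read_text())
    return {k: (golden.get(k), v) for k, v in current.items() if golden.get(k) != v}


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        golden = Path(tmp) / "shipping_fee.golden.json"
        check_against_golden(legacy_shipping_fee, CASES, golden)  # records behavior
        print(check_against_golden(legacy_shipping_fee, CASES, golden))  # compares
```

In a real repository the golden file would be committed next to the tests, so any refactor that changes an output shows up as an explicit diff in review.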

2. Ship refactors as tiny PRs

AI makes it easy to attempt a large cleanup in one pass. That usually leads to a PR no one fully understands and a regression no one can trace.

Treat refactoring with the same care as surgery.

- One PR equals one clear intent: Each change should solve a single, well-defined problem.

- Keep diffs narrow: Limit changes to a function, a file, or one module boundary.

- Ship progress in steps: Deliver a sequence of small improvements instead of aiming for one complete rewrite.

This approach reduces risk and makes AI-generated changes easier to review.

Reviewers can follow the logic and quickly spot what caused a change in behavior.

Set a simple rule for your team: if a reviewer cannot explain the change in under a minute, the PR is too large.
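A size limit can back up the one-minute rule automatically. The sketch below parses the tab-separated output of `git diff --numstat` (added lines, deleted lines, path) and flags a PR that exceeds a threshold. The limits of 5 files and 200 changed lines are assumptions chosen for illustration, not recommendations from any standard.

```python
def summarize_numstat(numstat_text):
    """Return (files_changed, lines_changed) from `git diff --numstat` output."""
    files, lines = 0, 0
    for row in numstat_text.splitlines():
        parts = row.split("\t")
        if len(parts) != 3:
            continue  # skip blank or unexpected rows
        added, deleted, _path = parts
        files += 1
        # Binary files are reported as "-" for both counts; count the file only.
        if added != "-" and deleted != "-":
            lines += int(added) + int(deleted)
    return files, lines


def pr_too_large(numstat_text, max_files=5, max_lines=200):
    """True when the diff exceeds either (assumed) size limit."""
    files, lines = summarize_numstat(numstat_text)
    return files > max_files or lines > max_lines
```

In CI, you could feed this the output of `git diff --numstat` between the base branch and the PR head and fail the check when `pr_too_large` returns `True`.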

3. Set limitations

If you want consistent results, don’t ask AI to “clean this up.” Define constraints that protect how the system works.

Examples of limitations you should document and reuse

- Do not change public interfaces such as method signatures or API contracts unless clearly approved.

- Do not modify business logic unless tests are added or updated to prove the change is intentional.

- Do not touch authentication, cryptography, or payments without review from the responsible owner.

- Do not remove code marked as unused until you confirm usage through logs, tracing, consumers, or cross-repo search.

- Do not rewrite SQL or data logic unless outputs remain identical and performance impact is validated.

These constraints act as guardrails. They stop AI from making changes that look correct but break hidden dependencies. In practice, they become your internal AI refactoring policy, especially useful when documentation is incomplete and risk is high. At this stage, the main concern is not speed. It is preserving the structure of the system.
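Some of these constraints can be checked mechanically. As one example, the rule about authentication, cryptography, and payments can become a small check that maps changed files to the owners who must approve them. The directory patterns and team names below are hypothetical; substitute your own layout.

```python
from fnmatch import fnmatch

# Hypothetical mapping from protected path patterns to the owning team.
PROTECTED_PATHS = {
    "src/auth/*": "security-team",
    "src/crypto/*": "security-team",
    "src/payments/*": "payments-team",
}


def required_approvals(changed_files, protected=PROTECTED_PATHS):
    """Return the sorted list of owners who must review this change."""
    owners = set()
    for path in changed_files:
        for pattern, owner in protected.items():
            # fnmatch's "*" also matches "/" so nested files are covered.
            if fnmatch(path, pattern):
                owners.add(owner)
    return sorted(owners)
```

A change that touches only documentation returns an empty list, while a change under `src/auth/` cannot merge until the listed owner has signed off.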

Refactoring legacy code with AI requires clear architecture, visibility into dependencies, reliable regression testing, and controlled rollouts. If your team cannot support that level of control, a structured modernization approach is safer.

Instead of relying only on automated changes, combine AI analysis with engineering oversight, risk assessment, and staged migration. This reduces the chance of hidden regressions and long-term instability.

4. Package the context

The quality of AI refactoring depends heavily on the quality of the context you provide. A model cannot respect boundaries it does not know are there.

What to include in your prompt or context package

- The goal: State what you want the refactor to achieve, such as improving readability, reducing duplication, or simplifying branching.

- The boundaries: Make it clear what must stay unchanged, including behavior, outputs, and public interfaces.

- The shape of the correct output: Provide sample inputs and outputs, or reference logs that show what correct behavior looks like.

- Relevant surrounding code: Include snippets or links from adjacent modules and interfaces the code depends on.

- Project rules: Add linter requirements, naming conventions, and error-handling patterns the refactor should follow.

You should also keep sensitive data out of prompts.

Do not paste corporate data, tokens, or personal info. If some context matters but cannot be shared safely, summarize it instead. For example, say that a method validates a user session and returns a 401 on failure, rather than including the real code or live data.

This is one of the most practical ways to improve AI refactoring outcomes because it reduces false assumptions and makes the output more predictable to review.
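One way to make the context package repeatable is to give it a fixed shape in code. The sketch below is an assumption-heavy illustration: the `RefactorContext` class, its field names, and the two redaction patterns are all invented here, and the patterns are deliberately narrow. A real setup would rely on a dedicated secrets scanner rather than two regular expressions.

```python
import re
from dataclasses import dataclass, field

# Illustrative patterns only; they will miss many real secrets.
SECRET_PATTERNS = [
    re.compile(r"AKIA[0-9A-Z]{16}"),  # shape of an AWS access key ID
    re.compile(r"(?i)(password|token|secret)\s*=\s*\S+"),
]


def redact(text):
    """Replace anything matching a secret pattern before text leaves the team."""
    for pattern in SECRET_PATTERNS:
        text = pattern.sub("[REDACTED]", text)
    return text


@dataclass
class RefactorContext:
    goal: str
    boundaries: list = field(default_factory=list)
    examples: list = field(default_factory=list)  # (input, expected output) pairs
    surrounding_code: str = ""
    project_rules: list = field(default_factory=list)

    def render(self):
        """Build the prompt text, with redaction applied to the final result."""
        sections = [
            "GOAL:\n" + self.goal,
            "MUST NOT CHANGE:\n" + "\n".join(f"- {b}" for b in self.boundaries),
            "EXPECTED BEHAVIOR:\n"
            + "\n".join(f"- {i!r} -> {o!r}" for i, o in self.examples),
            "SURROUNDING CODE:\n" + self.surrounding_code,
            "PROJECT RULES:\n" + "\n".join(f"- {r}" for r in self.project_rules),
        ]
        return redact("\n\n".join(sections))
```

Because every refactoring request goes through the same five sections, reviewers can see at a glance whether boundaries or expected behavior were left out of a prompt.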

5. Make domain review non-optional

AI can produce code that looks clean and correct, yet still miss subtle issues. That’s why human review is a required step, not an optional one. Even beyond refactoring, there is a clear trust gap:

- Stack Overflow’s 2024 Developer Survey reports that 76% of developers use or plan to use AI tools, but only 43% trust their accuracy.

- SonarSource found that many developers question AI output, yet do not verify it consistently.

What “mandatory” should mean in practice

- A reviewer with domain ownership signs off: Not just any developer, but someone who understands how the system is supposed to behave.

- Reviews focus on invariants: Check outputs, edge cases, error handling, and backward compatibility.

- Behavior changes must be explicit: If something changes, tests must prove it, and the PR must explain why.

This step protects you from the most expensive type of bugs, the ones that pass silently and surface later.

6. Run security gates independently

A clean refactor can still introduce security regressions, even when the code looks correct on review. AI support makes this risk more subtle by increasing developer confidence without guaranteeing secure output.

Research highlights this gap. A study by Perry et al. found that developers using AI assistants were more likely to produce insecure code while also believing it was secure. Separate studies on Copilot-style tools show that vulnerable suggestions remain present, even as models improve, with some experiments reporting rates such as 27.25% in controlled environments.

This means that AI review, combined with human review, is not sufficient as a security layer. Security checks must run independently and without exception.

In practice, every refactor should pass through the same baseline gates: dependency scanning and SBOM checks, secrets detection, SAST analysis, and policy checks for authentication-related changes. In more sensitive systems, teams also add targeted rules based on their stack, such as injection pattern detection.

This approach works because it removes judgment from the process. Security checks run every time, regardless of who wrote the code or how confident the team feels about it.
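As an illustration of a targeted rule, the sketch below uses Python’s standard `ast` module to flag calls to any `.execute()` method whose first argument is built dynamically (an f-string, string concatenation, `%` formatting, or `.format()`). This is a simplified heuristic written for this article, not a replacement for a real SAST tool: it will produce both false positives and false negatives.

```python
import ast


def find_sql_injection_risks(source):
    """Return line numbers where .execute() receives a dynamically built string."""
    risky = []
    for node in ast.walk(ast.parse(source)):
        is_execute_call = (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Attribute)
            and node.func.attr == "execute"
            and node.args
        )
        if not is_execute_call:
            continue
        query = node.args[0]
        is_fstring = isinstance(query, ast.JoinedStr)
        is_concat_or_percent = isinstance(query, ast.BinOp) and isinstance(
            query.op, (ast.Add, ast.Mod)
        )
        is_format_call = (
            isinstance(query, ast.Call)
            and isinstance(query.func, ast.Attribute)
            and query.func.attr == "format"
        )
        if is_fstring or is_concat_or_percent or is_format_call:
            risky.append(node.lineno)
    return sorted(risky)


# Sample input: line 1 uses a parameterized query, lines 2 and 3 do not.
SAMPLE = '''cur.execute("SELECT * FROM users WHERE id = %s", (user_id,))
cur.execute(f"SELECT * FROM users WHERE id = {user_id}")
cur.execute("SELECT * FROM users WHERE name = '" + name + "'")
'''

if __name__ == "__main__":
    print(find_sql_injection_risks(SAMPLE))
```

Run as a CI step, a rule like this fails the build whenever a refactor replaces a parameterized query with string building, no matter how clean the diff looks.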

7. Refactor only with rollback

Legacy refactoring is not just about improving code quality. It is a form of production risk management. A rollback plan is what makes small changes safe and prevents a clean refactor from turning into a production incident.

That plan needs to be practical and ready to use, not something written down and forgotten.

- Use a release strategy that supports rollback: Feature flags, canary releases, or blue-green deployments should already be in place before changes go live.

- Define clear stop conditions: Know which metrics will trigger a rollback, whether it is error rates, latency, or failed transactions.

- Rehearse the process: Your team should know who initiates the rollback, how quickly it happens, and what gets communicated.

Many teams believe they have this covered until they actually need it. If rollback is slow or unclear, refactoring becomes risky by default.
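Stop conditions are easier to follow under pressure when they are written down as code rather than kept in someone’s head. The sketch below is a minimal example. The metric names and threshold values are assumptions chosen for illustration, and real limits should come from your own baselines.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StopConditions:
    # Illustrative thresholds; tune them to your system's normal behavior.
    max_error_rate: float = 0.02
    max_p95_latency_ms: float = 800.0
    max_failed_transactions: int = 5


def rollback_reasons(metrics, limits=StopConditions()):
    """Return the list of breached stop conditions; empty means keep going."""
    reasons = []
    if metrics["error_rate"] > limits.max_error_rate:
        reasons.append("error rate above limit")
    if metrics["p95_latency_ms"] > limits.max_p95_latency_ms:
        reasons.append("p95 latency above limit")
    if metrics["failed_transactions"] > limits.max_failed_transactions:
        reasons.append("too many failed transactions")
    return reasons
```

A canary step can call `rollback_reasons` on each metrics sample and trigger the rollback as soon as the list is non-empty, which also gives the team a ready-made message to communicate.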

If you want to put these guardrails in place without slowing delivery, start with a short setup phase. Spend one to two weeks establishing characterization tests for critical flows, defining PR constraints, adding automated security checks, and setting up a release process that supports fast rollback.

Final Thoughts

AI can support legacy code refactoring, but only when it operates inside a clear and controlled process.

Teams that see real results do not rely on intuition. They rely on defined contracts through tests, disciplined workflows with explicit constraints, domain review, independent security checks, and releases that can be rolled back safely.

When these practices are in place, teams spend less time fixing issues after refactoring and more time improving the system in a controlled way. If your team has already used AI heavily and is now dealing with inconsistent patterns, fragile modules, or missing documentation, it makes sense to stabilize before moving further.

A structured cleanup approach can help you review AI-generated code, fix weak areas, restore test coverage, and reintroduce engineering guardrails.

Instead of continuing to build on unstable ground, this step helps turn early AI-driven changes into reliable, production-ready systems.

What once supported the business now dictates what’s possible. Legacy application modernization is about regaining control. Get in touch to learn more.