Artificial intelligence is no longer a niche field reserved for researchers in lab coats. It’s already being baked into everyday products and business workflows. Over 66% of people now use AI on a regular basis. In business, 78% of organisations have adopted AI in some form, up sharply from 55% just a year earlier (Stanford HAI, 2025). And the next generation is already all-in: 92% of students now use generative AI, and nearly one in five say they’ve turned in AI-generated content as part of their assignments.

The market is responding in kind. The global AI industry, including tools, platforms, and services, is now valued at over $500 billion, representing a 31% year-over-year increase, with projections reaching $1 trillion by 2031.

At the same time, 40% of jobs are exposed to the impact of AI. So, if you want to avoid being left behind, it’s high time to get hands-on. The good news? You don’t need a PhD. You just need a clear path forward to start exploring AI and building practical skills you can apply at work. The hard part is knowing where to begin: there’s a sea of frameworks, machine learning models, APIs, cloud platforms, and community tools to explore. The landscape changes every few months, and it’s easy to get lost chasing what’s shiny instead of what’s useful.

Here’s a step-by-step path to get started with artificial intelligence in a way that’s grounded, efficient, and scalable.

Start with ChatGPT: Learn how to talk to the model

Before you jump into code or APIs, start with the basics: learning how large language models respond to human prompts.

Open ChatGPT (or any similar chatbot powered by GPT-4) and start experimenting. Write prompts and rewrite them, changing the tone. Ask the same question three different ways and see how the output changes.

It may seem simple at first, but learning how to write effective prompts is a must-have skill. Prompt engineering goes beyond clever phrasing. It requires a solid grasp of how the model processes instructions, manages limitations, and interprets context. The way you frame your input has a direct impact on the quality and consistency of the output.

There’s already a growing body of practical guidance on this, from role-based prompts to few-shot examples and structured task instructions. But you don’t need to study it all upfront. The best way to learn is to experiment. Try different approaches, adjust the wording, and observe how the model responds. Over time, you’ll develop a feel for what works and why. That intuition becomes incredibly valuable later on, especially when you start integrating AI into real workflows and applications.
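To make "role-based prompts" and "few-shot examples" concrete, here is a minimal sketch of how such a prompt can be assembled as a list of chat messages. The `build_messages` helper and the support-ticket examples are hypothetical and only illustrate the structure; nothing here calls a model.

```python
def build_messages(role_description, examples, question):
    """Assemble a role-based, few-shot chat prompt as a list of messages."""
    # The system message sets the role the model should play.
    messages = [{"role": "system", "content": role_description}]
    # Each example is shown as a user turn followed by the ideal assistant reply.
    for user_text, assistant_text in examples:
        messages.append({"role": "user", "content": user_text})
        messages.append({"role": "assistant", "content": assistant_text})
    # The real question always comes last.
    messages.append({"role": "user", "content": question})
    return messages


messages = build_messages(
    role_description="You are a support agent. Classify each ticket as 'billing' or 'technical'.",
    examples=[
        ("I was charged twice this month.", "billing"),
        ("The app crashes when I log in.", "technical"),
    ],
    question="My invoice shows the wrong amount.",
)

for message in messages:
    print(f"{message['role']}: {message['content']}")
```

Changing the role description, swapping the examples, or rewording the final question are exactly the kinds of experiments worth running by hand in a chat window first.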

Make simple integrations: Learn the fundamentals through the OpenAI API

Once you’ve built a solid feel for how prompting works, it’s time to shift gears, from interacting with the model manually to integrating it into actual software. This is where you stop experimenting in a chat window and start building something great.

OpenAI’s API is one of the most accessible ways to begin. Start with a basic Python script: send a prompt to GPT-4, capture the response, and display it. From there, start layering in tools. Use the chat/completions endpoint to structure conversations, create simple assistants, or automate decision-making tasks. Explore the embeddings endpoint to convert text into vectors, an important step for building search engines, recommendation tools, or any system that needs to understand meaning at scale.
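A first script along these lines might look like the sketch below. It assumes the official `openai` Python package (version 1 or later) and an `OPENAI_API_KEY` environment variable; the helper names `ask` and `embed` are made up for this example, and the model names are simply the ones mentioned in this article, so swap in whatever your account offers.

```python
def ask(client, prompt, model="gpt-4"):
    """Send one user prompt and return the model's reply as plain text."""
    response = client.chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": prompt}],
    )
    return response.choices[0].message.content


def embed(client, texts, model="text-embedding-3-small"):
    """Convert a list of strings into a list of embedding vectors."""
    response = client.embeddings.create(model=model, input=texts)
    return [item.embedding for item in response.data]


def main():
    # Imported here so the helpers above can be read without the package installed.
    from openai import OpenAI

    client = OpenAI()  # picks up the OPENAI_API_KEY environment variable
    print(ask(client, "Explain embeddings in one sentence."))
    vectors = embed(client, ["refund policy", "how do I get my money back?"])
    print(len(vectors), "vectors of length", len(vectors[0]))
```

Call `main()` once your key is set. Passing the client in as an argument keeps the helpers easy to test with a stand-in object, which becomes handy as soon as you want to check your own logic without paying for requests.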

Such hands-on experimentation is where things start to feel real. You’ll begin to understand what models are available, how they differ in capabilities and cost (GPT-3.5 vs GPT-4, for example), and how token pricing and rate limits shape design decisions. You'll get a sense of how the API behaves in production-like environments, where speed, reliability, and cost efficiency matter.
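To see how token pricing shapes design decisions, a rough back-of-the-envelope estimate helps. The sketch below uses hypothetical model names and hypothetical prices per 1,000 tokens purely for illustration; real prices change often, so plug in the current figures from your provider.

```python
# Hypothetical prices in US dollars per 1,000 tokens (NOT real price points).
PRICES_PER_1K = {
    "small-model": {"input": 0.0005, "output": 0.0015},
    "large-model": {"input": 0.03, "output": 0.06},
}


def estimate_cost(model, input_tokens, output_tokens, requests):
    """Estimate the total cost of `requests` calls with the given token counts per call."""
    price = PRICES_PER_1K[model]
    per_request = (
        (input_tokens / 1000) * price["input"]
        + (output_tokens / 1000) * price["output"]
    )
    return per_request * requests


for model in PRICES_PER_1K:
    cost = estimate_cost(model, input_tokens=800, output_tokens=200, requests=10_000)
    print(f"{model}: ${cost:,.2f} for 10,000 requests")
```

Even with made-up numbers, the pattern is the useful part: a longer prompt or a larger model multiplies across every request, which is why prompt length and model choice are design decisions rather than afterthoughts.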

So, now you’re building a foundation for everything that comes next: embeddings, search, memory, fine-tuning, and more. This is where AI stops being abstract and starts becoming a tool you can actually use.

Explore third-party frameworks in artificial intelligence: Start with LangChain

Once you're comfortable working directly with the OpenAI API, you'll start to notice some patterns and friction. You’ll write similar logic over and over again: sending prompts, parsing responses, resolving errors, managing context. That’s your cue to start exploring third-party frameworks that simplify and scale your work.
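Two of those repeated chores, handling errors and managing context, tend to look something like the sketch below. Both helpers are hypothetical and deliberately simple: `call_with_retries` retries on any exception, whereas real code would catch only the rate-limit and timeout errors of the client library in use, and `trim_history` keeps a fixed number of recent messages rather than counting tokens.

```python
import time


def call_with_retries(call, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Run `call()` and retry with exponential backoff if it raises an exception."""
    for attempt in range(1, max_attempts + 1):
        try:
            return call()
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: let the caller see the error
            sleep(base_delay * 2 ** (attempt - 1))  # wait 1s, 2s, 4s, ...


def trim_history(messages, max_messages=6):
    """Keep the first system message (if any) plus the most recent other messages."""
    system = [m for m in messages if m["role"] == "system"][:1]
    rest = [m for m in messages if m["role"] != "system"]
    return system + rest[-max_messages:]


history = [{"role": "system", "content": "Be brief."}] + [
    {"role": "user", "content": f"message {i}"} for i in range(10)
]
print(len(trim_history(history)))
```

Once you have written helpers like these a few times, the appeal of a framework that ships them for you becomes obvious.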

A great place to begin is LangChain, an open-source framework for building applications powered by large language models. It abstracts away a lot of boilerplate and introduces core building blocks like:

Chains: sequences of operations where the output of one step feeds into the next

Memory: giving your application the ability to remember previous interactions

Tools: letting the AI interact with external functions, APIs, or data sources
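The three building blocks are easier to grasp once you see how little is behind each idea. The sketch below is plain Python and deliberately does not use LangChain's own classes or API; `fake_llm`, `make_chain`, `Memory`, and `TOOLS` are hypothetical names invented for this illustration, and the "model" is a stand-in so the example runs offline.

```python
def fake_llm(prompt):
    """Stand-in for a real model call so the example runs offline."""
    return f"[model reply to: {prompt}]"


def make_chain(*steps):
    """Chain: feed the output of each step into the next."""
    def run(value):
        for step in steps:
            value = step(value)
        return value
    return run


class Memory:
    """Memory: keep earlier turns so they can be included in later prompts."""

    def __init__(self):
        self.turns = []

    def add(self, user_text, reply):
        self.turns.append((user_text, reply))

    def as_context(self):
        return "\n".join(f"User: {u}\nAssistant: {r}" for u, r in self.turns)


# Tools: named functions the application can call on the model's behalf.
TOOLS = {
    "word_count": lambda text: str(len(text.split())),
    "upper": lambda text: text.upper(),
}

summarise = make_chain(
    lambda text: f"Summarise in one line: {text}",  # build the prompt
    fake_llm,                                       # call the (fake) model
    str.strip,                                      # tidy the output
)

memory = Memory()
question = "LangChain groups prompts, models and parsers into chains."
answer = summarise(question)
memory.add(question, answer)

print(answer)
print(memory.as_context())
print(TOOLS["word_count"](question))
```

A framework adds a lot on top of this (streaming, tracing, integrations), but the underlying shapes are the same: composed steps, stored turns, and a registry of callable functions.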

Try building something simple but practical, for example, a document Q&A tool that reads a PDF, summarises it, and answers follow-up questions. It’s a great exercise in connecting multiple components: text input, embeddings, retrieval, and generation.

LangChain and frameworks like it aren’t just shortcuts. They help you think more modularly, design workflows that are more inclusive and accessible, and build apps that go beyond one-off prompts. Even if you decide to move to a different framework later, understanding how LangChain structures AI-powered logic is time well spent.

Build a RAG system: Must-have for AI careers

Once you've explored basic workflows with LangChain, it’s time to take a bigger step: building an AI agent that can work well with your own data. This is where Retrieval-Augmented Generation (RAG) comes in, a pattern that lets the model access external knowledge dynamically rather than relying only on what it was trained on.

At this stage, you’ll start working with LangGraph, a framework from the LangChain team for building stateful, multi-step agent workflows as graphs. Together with LangChain, it gives you the structure needed to build more autonomous, multi-step agents.

To get started, you'll need to understand the core components of a RAG system:

Break down your documents into chunks

Convert them into embeddings using a model like text-embedding-3-small

Store them in a vector database (such as FAISS, Pinecone, or Chroma)

Retrieve the most relevant content based on user queries

Feed that retrieved context back into the LLM for a grounded response
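The five steps above can be sketched end to end in a few dozen lines. To keep the example runnable without an API key or a database, this toy version swaps in a bag-of-words counter for a real embedding model such as text-embedding-3-small and an in-memory list for a vector database; every function and class name here is hypothetical.

```python
import math
import re
from collections import Counter


def chunk(text, max_words=12):
    """Step 1: split a document into chunks of at most `max_words` words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text):
    """Step 2 (toy): word counts stand in for a real embedding vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class VectorStore:
    """Step 3 (toy): an in-memory list standing in for a vector database."""

    def __init__(self):
        self.items = []

    def add(self, text):
        self.items.append((text, embed(text)))

    def search(self, query, k=2):
        """Step 4: return the k chunks most similar to the query."""
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


def build_prompt(question, context_chunks):
    """Step 5: put the retrieved context in front of the question for the LLM."""
    context = "\n".join(f"- {c}" for c in context_chunks)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


document = (
    "Refunds are issued within 14 days of purchase. "
    "Support is available by email on weekdays. "
    "Annual plans include a 20 percent discount."
)
store = VectorStore()
for piece in chunk(document, max_words=8):
    store.add(piece)

question = "How many days do I have to request refunds?"
print(build_prompt(question, store.search(question, k=1)))
```

The printed prompt is what you would send to the model. Note that the fixed-size chunker happily cuts sentences in half, which is one reason real systems spend effort on smarter chunking and on embeddings that capture meaning rather than exact word overlap.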

This architecture unlocks powerful use cases, from internal knowledge bots to customer support assistants and domain-specific Q&A systems. You’re no longer limited to what the model “knows.” Instead, you’re giving it real-time access to the information it needs.

Along the way, you’ll get hands-on with vector search, document indexing, prompt orchestration, and multi-turn reasoning. It’s your first real step into building AI agents that can reason, retrieve, and adapt, bringing you closer to production-grade systems.

Try fine-tuning with OpenAI’s API

By now, you’ve used prompts, integrated APIs, and even retrieved external knowledge. However, sometimes you’ll want more control, especially if the model’s responses are too generic or inconsistent.

This is where fine-tuning starts to make sense.

OpenAI makes this relatively simple: you upload a file of example input-output pairs (training data), start a fine-tuning job, and get back a custom model tailored to your use case. It works well for:

Product-specific terminology

Tone adaptation

Repetitive or structured tasks like classification

Keep in mind that fine-tuning is not always the best answer. But when used right, it can reduce prompt complexity and improve reliability.
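Most of the work in fine-tuning is preparing the training data. As a minimal sketch, the snippet below formats input-output pairs as JSON Lines in the chat-style layout OpenAI uses for chat models, with one `messages` list per line. The classification examples and the `to_training_line` helper are hypothetical, and a real dataset needs far more than two examples.

```python
import json


def to_training_line(system_prompt, user_text, ideal_reply):
    """Format one input-output pair as a chat-style JSONL training line."""
    record = {
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_text},
            {"role": "assistant", "content": ideal_reply},
        ]
    }
    return json.dumps(record, ensure_ascii=False)


SYSTEM = "Classify the support ticket as 'billing' or 'technical'."
pairs = [  # hypothetical examples for illustration only
    ("I was charged twice this month.", "billing"),
    ("The app crashes when I log in.", "technical"),
]

lines = [to_training_line(SYSTEM, user, reply) for user, reply in pairs]
print("\n".join(lines))
```

Saved to a `.jsonl` file, output like this is what you would upload when creating a fine-tuning job. Reviewing the examples by hand before uploading is time well spent, because the model will copy whatever patterns they contain, good or bad.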

Explore local models: Start with LLaMA

Once you’re confident with cloud APIs, it’s time to explore what’s happening in the open-source community. Meta’s LLaMA models are a good entry point.

Unlike OpenAI’s hosted models, LLaMA can run locally: on your own machine, in a container, or on a server you control. Tools like Ollama or LM Studio can help you get started without deep machine learning knowledge.
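If you go the Ollama route, talking to a local model is a plain HTTP call. The sketch below assumes an Ollama server running on its default local port and a model already pulled under the name `llama3`; that model name is an assumption, so use whatever name you actually downloaded. Only the standard library is used.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local address


def build_request(prompt, model="llama3"):
    """Build the HTTP request for a single, non-streaming completion."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, model="llama3"):
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model)) as response:
        return json.loads(response.read())["response"]


def main():
    # Requires a running Ollama server and a model pulled beforehand.
    print(generate("Explain quantisation in one sentence."))
```

Call `main()` once the server is up. Nothing leaves your machine, which is the whole point of running locally.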

Why bother with local models?

You get full control over the inference process

You can run them offline or in secure environments

You can experiment without worrying about API costs

It’s also where you’ll start seeing the low-level details (tokenisation, quantisation, context windows) and appreciating how much work cloud APIs abstract away.
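Two of those details come down to simple arithmetic. The sketch below estimates how much memory the weights alone need at different quantisation levels, and checks whether a request fits in a context window. The 7-billion-parameter size and the 4,096-token window are example figures rather than the specification of any particular model, and real memory use is higher once activations and caches are included.

```python
def weight_memory_gb(parameters, bits_per_weight):
    """Approximate memory needed just for the weights, in gigabytes (10**9 bytes)."""
    return parameters * bits_per_weight / 8 / 1e9


def fits_in_context(prompt_tokens, max_new_tokens, context_window):
    """A request fits only if prompt plus generated tokens stay within the window."""
    return prompt_tokens + max_new_tokens <= context_window


PARAMETERS = 7_000_000_000  # example size: a model with 7 billion parameters

for bits in (16, 8, 4):
    print(f"{bits}-bit weights: ~{weight_memory_gb(PARAMETERS, bits):.1f} GB")

print(fits_in_context(prompt_tokens=3_500, max_new_tokens=500, context_window=4_096))
print(fits_in_context(prompt_tokens=3_900, max_new_tokens=500, context_window=4_096))
```

Halving the bits per weight halves the memory for the weights, which is why quantised models are what make laptops and small servers viable for local inference.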

Pick a direction for your career path

Once you’ve worked with prompts, APIs, embeddings, and maybe even built your first AI agent, you’ll start to get a feel for what part of this domain actually interests you. That’s when it makes sense to focus.

Maybe you’re into infrastructure and want to go deeper into deploying and optimising models. Maybe you enjoy building product features and want to master a framework like LangChain or Semantic Kernel. Or maybe you’re looking to scale and want to explore what the big cloud platforms offer.

At this stage, AWS, Azure, and Google Cloud all have their own AI services and tooling. But here’s the thing: it’s easy to get lost in them if you jump in too early. Without a strong foundation, these platforms can feel overwhelming and overly complex.

So take your time. Once you know what you're building and why, you’ll be in a much better position to pick the right tools and go deep. Whether that’s a cloud service, an open-source stack, or a specific product area, this is where you start to focus and build real expertise.

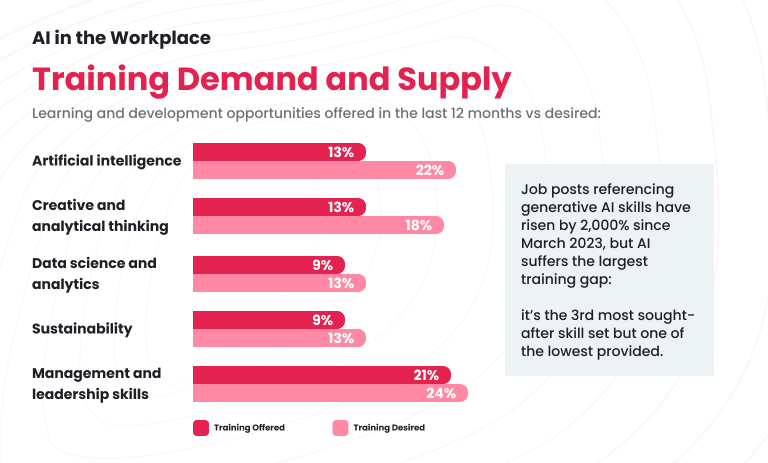

And this isn’t just about individual growth. Across industries, teams are increasingly expected to work alongside AI, whether in marketing, operations, engineering, or support. As AI becomes more embedded in our workflows, more employees are asking for practical training, not just tools. They want to understand how AI fits into their roles and how to use it effectively.

Go wide: Hugging Face and the open-source ecosystem

Now it’s time to zoom out and see the bigger picture.

One of the best places to do that is Hugging Face. Think of it as the GitHub of modern AI technologies. It’s where researchers, developers, and companies publish their work, share tools, and collaborate on advanced projects. You’ll find:

Thousands of models across text, vision, audio, and multi-modal use cases

Public datasets for training and experimentation

Libraries like Transformers, Diffusers, PEFT, and Evaluate

Leaderboards, benchmarks, and constantly updated community projects

This is where most of the open-source “magic” in AI happens, often months before it’s wrapped into commercial platforms. Want to experiment with models beyond OpenAI? Check out Mistral, Mixtral, Falcon, or the openly released versions of LLaMA. Curious about multi-modal AI? Some models can process both images and text side-by-side.
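Getting a first model running from the Hub takes only a few lines with the Transformers library. The sketch below assumes `transformers` and a backend such as PyTorch are installed, and that you are happy for the library to download its default sentiment model on first use; `load_classifier` and `summarise_sentiment` are hypothetical helper names.

```python
def load_classifier():
    """Load the library's default sentiment model (downloads weights on first use)."""
    from transformers import pipeline  # imported lazily; needs `pip install transformers`

    return pipeline("sentiment-analysis")


def summarise_sentiment(texts, classifier):
    """Run the classifier over the texts and pair each text with its label and score."""
    results = classifier(texts)
    return [
        (text, result["label"], round(result["score"], 3))
        for text, result in zip(texts, results)
    ]


def main():
    classifier = load_classifier()
    reviews = ["This library is a joy to use.", "The install kept failing."]
    for text, label, score in summarise_sentiment(reviews, classifier):
        print(f"{label:>8} {score:.3f}  {text}")
```

Call `main()` to try it. Swapping the task string or passing a specific model name from the Hub is usually all it takes to move from sentiment analysis to summarisation, translation, or another task.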

You don’t need to master it all. The ecosystem grows really quickly. But this is where you keep your edge by staying curious, trying new tools, and seeing where the field is headed before it becomes mainstream.

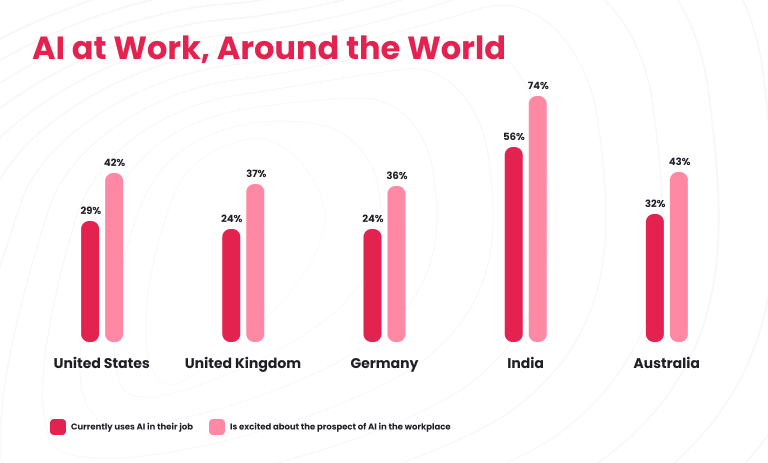

Map out your career path: From software engineer to AI professional

As you build hands-on experience with real AI applications, you’ll start to see how these skills translate into career opportunities. The demand for AI professionals is growing fast, not just in research labs, but across nearly every industry looking to integrate intelligent systems into their products and services.

Whether you’re coming from a software engineering background, data science, or business intelligence, there’s room to go deeper. You might lean into data engineering, focusing on the pipelines, infrastructure, and tooling that power AI models. Or you might specialise in deep learning and computer vision, developing custom models or fine-tuning existing ones for specific use cases.

The job market is maturing just as quickly as the tech. New roles are emerging that didn’t exist a year ago. For example, employers are on the lookout for prompt engineers, AI product leads, applied AI developers, and more. Companies are actively looking for people who not only understand how to use AI tools, but who can build, adapt, and scale AI applications in production.

Your technical foundation gives you a head start. The next step is to apply it intentionally, to pick a direction that aligns with your interests, gain hands-on experience, and stay flexible.

Stay agile in your career: Everything will keep changing

One final piece of advice: don’t expect stability. Every few months, something new disrupts the status quo.

Make peace with this. Instead of clinging to one tool or one framework, build habits around:

Reading changelogs and release notes

Experimenting regularly

Refactoring and rethinking as needed

The most valuable skill you can build isn’t mastery of one platform: it’s your ability to adapt, evaluate, and rebuild.

There’s no single “correct” way to break into AI. But there is a smarter way: start small, stay practical, and build hands-on experience as you go.

You don’t need a million-dollar budget or a team of AI engineers. You just need to keep showing up, staying curious, and layering your skills one step at a time. That’s your real path to AI, and it’s wide open.

Amid technological uncertainty, Altamira remains your trusted AI-first technology partner in business transformation. We will carefully create and structure your AI roadmap, help you adopt best practices, manage your data, and develop feature-rich solutions that automate, improve, and facilitate your operations.

Wondering how AI capabilities could empower your business? Join our free AI discovery workshop! Contact us to get more information.