AI has carried two different mindsets since the 1950s. On one side is symbolic AI, the rule-based approach that dominated early research. On the other is machine learning, the data-driven method that now powers most of the NLP you use every day.

Machine learning takes a simple idea and scales it: let the system learn patterns from examples instead of hard-coding every rule. You feed it data, it builds statistical models, and it keeps adjusting those models as it processes more examples. No one tells it the exact steps. It figures them out from what it sees.

If a human tried to sift through millions of sentences to predict the next word or classify intent, they’d burn out in minutes. A machine learning model doesn’t. It improves with volume. The more patterns it catches, the better its future predictions get.
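To make “learning patterns from examples” concrete, here is a minimal sketch of a next-word predictor. It is a toy bigram counter, not how production language models work, and the corpus and the function names `train_bigrams` and `predict_next` are made up for illustration.

```python
from collections import Counter, defaultdict

def train_bigrams(sentences):
    """Count, for every word, which words follow it in the training text."""
    follows = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows

def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    candidates = follows.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]

# Hypothetical training examples; a real system would use millions of sentences.
corpus = [
    "show me the news",
    "show me the weather",
    "tell me the news today",
    "read me the news",
]
model = train_bigrams(corpus)
print(predict_next(model, "the"))  # news (seen 3 times, weather only once)
print(predict_next(model, "me"))   # the
```

Nobody wrote a rule saying “news follows the.” The prediction falls out of the counts, and it would shift if the examples changed.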

The shift from hand-crafted rules to learned patterns is what pushed machine learning to the center of natural language processing (NLP). It simply handles scale and ambiguity in a way symbolic systems never could.

What is symbolic artificial intelligence?

Symbolic AI, often called GOFAI (Good Old-Fashioned AI), comes from the early days of the field, when researchers tried to reproduce human language and reasoning the way people seem to approach them: through clear rules and well-defined symbols.

Instead of learning from large datasets, these systems rely on human-written logic. You tell the system what matters, how concepts relate, and what conclusions to draw.

For example, imagine a medical rule: “If the patient has a fever, a cough, and trouble breathing, check for pneumonia.”

A symbolic system follows that chain exactly. You can trace every step of the reasoning, which is why these systems still show up in areas where people need to understand how decisions are made.
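As a rough sketch, the pneumonia rule above could be encoded like this. The function name `check_pneumonia_rule` and the trace format are assumptions made for illustration; real clinical systems are far more involved.

```python
def check_pneumonia_rule(symptoms):
    """Apply one hand-written rule and record every step of the reasoning."""
    required = ["fever", "cough", "trouble breathing"]
    trace = []
    for symptom in required:
        present = symptom in symptoms
        trace.append(f"{symptom}: {'present' if present else 'absent'}")
        if not present:
            # Stop at the first missing condition; the rule cannot fire.
            trace.append("rule not satisfied, no recommendation")
            return None, trace
    trace.append("all conditions met, recommend: check for pneumonia")
    return "check for pneumonia", trace

recommendation, trace = check_pneumonia_rule({"fever", "cough", "trouble breathing"})
print(recommendation)
for step in trace:
    print(" -", step)
```

The trace is the point: every conclusion comes with the list of checks that produced it.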

The strength of symbolic AI comes from its structure. It breaks knowledge into pieces the same way language breaks ideas into words and sentences. Because of that, it can reason about abstract statements such as “all mammals are warm-blooded” or “if X is true, Y must follow,” and apply them consistently. Expert systems and knowledge-based tools were built on this foundation.
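One common way to apply “if X is true, Y must follow” statements is forward chaining: keep firing rules until nothing new can be derived. The sketch below assumes a very simple representation (facts as strings, rules as premise/conclusion pairs), and the fact names are hypothetical.

```python
def forward_chain(facts, rules):
    """Repeatedly apply if-then rules until no new facts can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if conclusion not in known and all(p in known for p in premises):
                known.add(conclusion)
                changed = True
    return known

# Each rule reads: if all premises hold, the conclusion holds.
rules = [
    (("is_dog",), "is_mammal"),
    (("is_mammal",), "is_warm_blooded"),  # "all mammals are warm-blooded"
]
derived = forward_chain({"is_dog"}, rules)
print(sorted(derived))  # ['is_dog', 'is_mammal', 'is_warm_blooded']
```

The system never saw an example of a warm-blooded dog. It reached the conclusion by chaining two explicit rules, and it will reach the same conclusion every time.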

The trade-off is flexibility. Symbolic AI doesn’t adapt on its own. When real-world conditions change, someone has to update the rules by hand. That makes it less suited to messy data, unpredictable inputs, or unstructured text.

Still, symbolic AI remains useful when transparency matters more than scale. And many modern approaches borrow from it, blending clear reasoning paths with the pattern-finding strengths of machine learning.

The symbolic approach applied to NLP techniques

Symbolic AI has a long history in natural language understanding and processing, especially in early chatbots and rule-driven dialogue systems. The idea is simple: you teach the machine a language the same way people learn it, through grammar, vocabulary, and clear rules.

Computational linguists write those rules by hand, define how words relate, and shape the system’s understanding step by step.

Everything inside a symbolic NLP system is visible. You can open it up and see exactly why it arrived at a given interpretation. That transparency is a strong contrast to machine-learning models, where the reasoning often sits inside a “black box.”

So, instead of supplying thousands of example sentences and hoping the model generalizes, we expand the knowledge base directly. When the system makes a mistake, we can see why, make a targeted fix, and move on.
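Here is a small sketch of what “expanding the knowledge base directly” can look like. The `INTENT_RULES` dictionary and its regular-expression patterns are hypothetical; real symbolic NLP systems use much richer grammars and lexicons.

```python
import re

# Hypothetical knowledge base: each intent maps to hand-written patterns.
INTENT_RULES = {
    "get_news": [r"\bnews\b", r"\bheadlines\b"],
    "get_weather": [r"\bweather\b", r"\bforecast\b"],
}

def match_intent(text, rules):
    """Return (intent, pattern) for the first rule that fires, else (None, None)."""
    lowered = text.lower()
    for intent, patterns in rules.items():
        for pattern in patterns:
            if re.search(pattern, lowered):
                return intent, pattern
    return None, None

print(match_intent("Show me today's news", INTENT_RULES)[0])  # get_news
print(match_intent("Will it rain tomorrow?", INTENT_RULES))   # (None, None)

# Targeted fix: add one rule to the knowledge base. No retraining involved.
INTENT_RULES["get_weather"].append(r"\brain\b")
print(match_intent("Will it rain tomorrow?", INTENT_RULES)[0])  # get_weather
```

Because the function returns the pattern that fired, a wrong answer points straight at the rule responsible for it.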

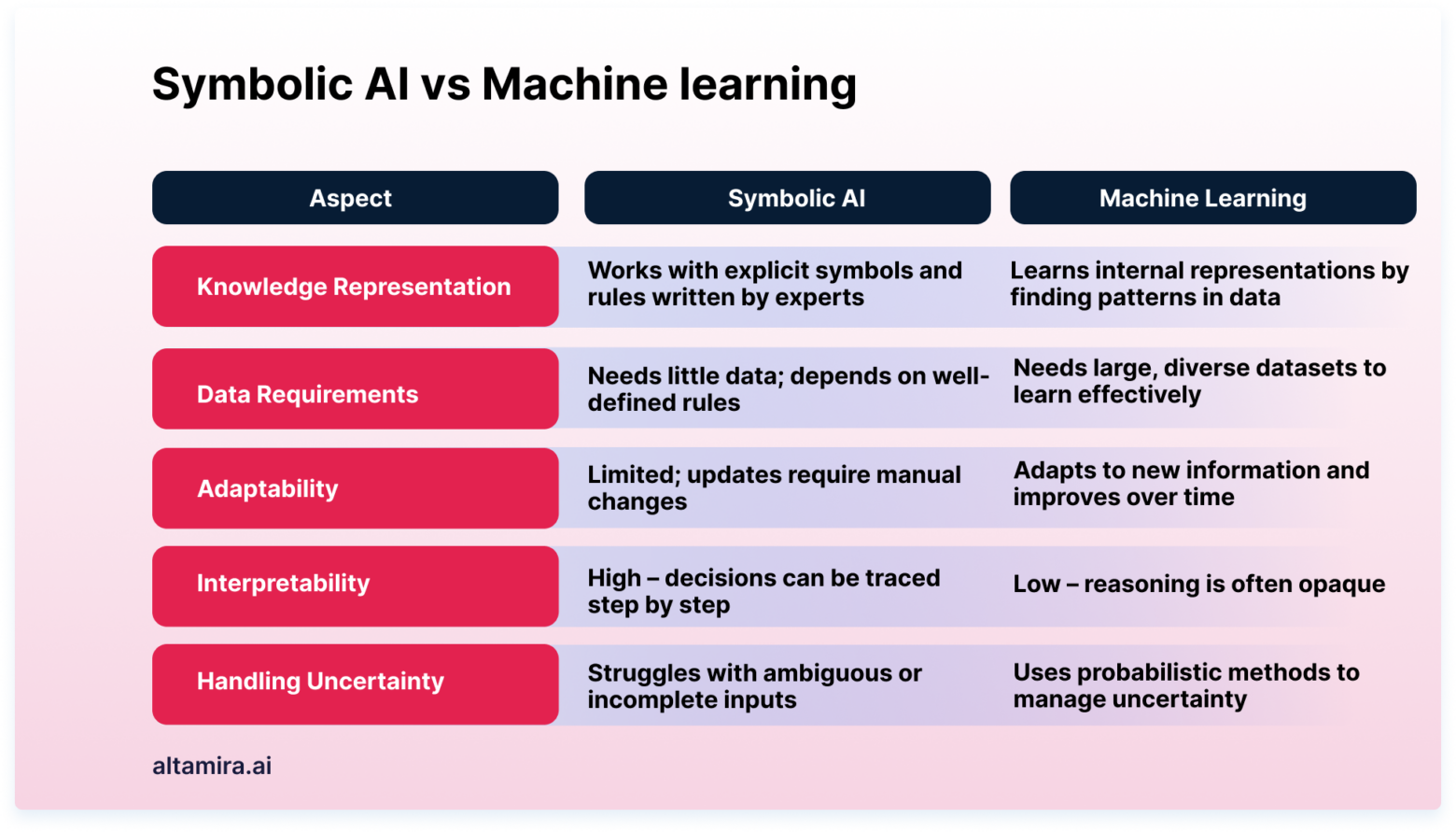

At its core, the difference between symbolic reasoning and machine learning comes down to how the system learns. Machine learning discovers patterns statistically. Symbolic AI follows rules written by humans.

Machine learning can handle huge amounts of data, but it comes with trade-offs: limited transparency and the need for large, well-labeled datasets.

What is machine learning?

Machine learning changes the way computers solve problems. Instead of following a long list of instructions, these systems learn from examples. You give them data, they find patterns, and they use those patterns to make decisions.

At a basic level, machine learning is pattern recognition at scale. It handles tasks that would break traditional, rule-based systems, such as scanning financial transactions for fraud or picking out objects in images. That’s why it shows up in everyday tools: recommendation engines, speech recognition, and copilots.

The strength of machine learning is that it improves with experience. The more data it processes, the sharper its predictions become. A model that has reviewed thousands of medical images, for example, tends to catch subtle details that a rule-based system would miss. This ongoing refinement is why machine learning works well in fields that deal with complexity and variation.

You see it across industries: spotting tumors in scans, flagging unusual credit card activity, helping students learn languages, or powering search and navigation.

Still, not every problem needs machine learning. One founder notes that he has seen companies reach for ML when a simple, deterministic rule-based system would have done the job. It’s a reminder that the value comes from choosing the right approach, not the most complicated one.

Machine learning in natural language processing (NLP)

Machine learning plays an important role in NLP, especially in traditional chatbot systems. The setup is familiar: the person managing the bot feeds the model large amounts of example phrases. The model studies those examples and learns to match user questions to an underlying intent.

If a user asks “show me today’s news” or “what’s the news today?”, the system should recognize both as the same intent. In practice, the model only gets there after reviewing many variations. The engineer has to correct its mistakes, reinforce the right matches, and repeat that cycle across the entire knowledge base.
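The sketch below shows the idea with a tiny Naive Bayes classifier written from scratch. Naive Bayes is just one simple technique picked for illustration, not necessarily what any particular chatbot platform uses, and the six training phrases and the intent names are invented.

```python
import math
import re
from collections import Counter, defaultdict

def tokenize(text):
    """Lowercase the text and keep alphabetic tokens only."""
    return re.findall(r"[a-z]+", text.lower())

def train(examples):
    """Collect per-intent word counts from (phrase, intent) pairs."""
    word_counts = defaultdict(Counter)
    intent_counts = Counter()
    vocab = set()
    for phrase, intent in examples:
        intent_counts[intent] += 1
        for word in tokenize(phrase):
            word_counts[intent][word] += 1
            vocab.add(word)
    return word_counts, intent_counts, vocab

def classify(text, model):
    """Pick the intent with the highest log-probability (add-one smoothing)."""
    word_counts, intent_counts, vocab = model
    total = sum(intent_counts.values())
    best_intent, best_score = None, float("-inf")
    for intent, count in intent_counts.items():
        score = math.log(count / total)
        denom = sum(word_counts[intent].values()) + len(vocab)
        for word in tokenize(text):
            score += math.log((word_counts[intent][word] + 1) / denom)
        if score > best_score:
            best_intent, best_score = intent, score
    return best_intent

# Hypothetical labeled phrases; real bots need many more variations per intent.
examples = [
    ("show me today's news", "get_news"),
    ("any headlines this morning", "get_news"),
    ("what's the latest news", "get_news"),
    ("what is the weather today", "get_weather"),
    ("will it rain tomorrow", "get_weather"),
    ("weather forecast for the weekend", "get_weather"),
]
model = train(examples)
print(classify("what's the news today?", model))  # get_news
print(classify("is it going to rain", model))     # get_weather
```

The answer depends entirely on the examples. Remove a training phrase or two and the scores move, which is why the correct-and-retrain cycle described above is needed.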

Of course, there are countless ways to phrase a single question, and most bots hold hundreds of intents. Reviewing and retraining across all of them takes time and often feels like you’re doing the same work on a loop.

Machine learning is responsible for major developments in speech recognition and image classification. But for intent-based NLP, it comes with some blockers. You trade transparency for statistical guessing, and you spend a lot of effort shaping the model into something predictable.

That’s why some teams question whether machine learning techniques are the right fit for NLP. In many cases, the operational cost of maintaining the model outweighs the benefit, especially when a more deterministic approach could provide clearer reasoning at lower cost.

Key differences between symbolic AI and machine learning in NLP tasks

Symbolic AI and machine learning take two very different paths to solving problems. Symbolic systems rely on clear rules. Machine learning systems rely on patterns discovered from data. That single difference shapes how each approach behaves in practice.

Symbolic AI works with human-readable symbols and logic. Every rule is explicit, and every decision can be traced back to a specific piece of reasoning. When people describe symbolic systems as “transparent,” they mean you can open them up and see exactly why an answer was produced.

Machine learning models operate differently. They analyze large datasets, identify correlations, and build internal representations on their own. Instead of following predefined logic, they learn from experience. This makes them well-suited for messy, unstructured inputs like images, audio, or free-form text: places where writing rules by hand would be unrealistic.

Knowledge representation also diverges. In symbolic AI, domain experts encode what they know directly into the system. A medical AI, for example, reflects the reasoning of the physicians who wrote its rules. In machine learning, the model builds its own understanding by processing examples. It may find useful patterns that even experts wouldn’t think to look for.

Adaptability is another point of contrast. Symbolic AI is strong when the rules of the problem are stable and clearly defined. It falters when something unexpected appears outside the rule set. Machine learning requires more effort upfront (large datasets and careful training), but once trained, it can generalize to new situations and improve as more data arrives.

More recently, teams have started blending the two. Hybrid systems aim to keep the clarity of symbolic reasoning while adding the flexibility of machine learning. It’s a practical direction for real-world applications that need both accuracy and explainability.
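One simple way to blend the two, sketched below, is to try explicit rules first and fall back to a learned component only when no rule applies. This is only one possible hybrid design. The `RULES` table, the word-vote “model,” and the example phrases are all hypothetical.

```python
from collections import Counter

# Hypothetical hand-written rules: keyword -> intent.
RULES = {"refund": "billing", "invoice": "billing"}

def learn_word_votes(examples):
    """Count how often each word appears under each intent in labeled examples."""
    votes = {}
    for phrase, intent in examples:
        for word in phrase.lower().split():
            votes.setdefault(word, Counter())[intent] += 1
    return votes

def hybrid_classify(text, rules, votes):
    """Try explicit rules first; fall back to learned word votes."""
    words = text.lower().split()
    for word in words:
        if word in rules:
            return rules[word], f"rule: keyword '{word}'"
    tally = Counter()
    for word in words:
        tally.update(votes.get(word, {}))
    if tally:
        intent, _ = tally.most_common(1)[0]
        return intent, "learned: word votes"
    return None, "no rule fired and no learned evidence"

votes = learn_word_votes([
    ("my app keeps crashing", "support"),
    ("the app will not load", "support"),
    ("charge on my card", "billing"),
])
print(hybrid_classify("i want a refund", RULES, votes))     # decided by a rule
print(hybrid_classify("app crashing again", RULES, votes))  # decided by learned votes
print(hybrid_classify("hello there", RULES, votes))         # nothing applies
```

Each answer carries a short explanation of which path produced it, so the rule-based decisions stay fully traceable while the learned path covers phrasing the rules never anticipated.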

Applications of symbolic AI

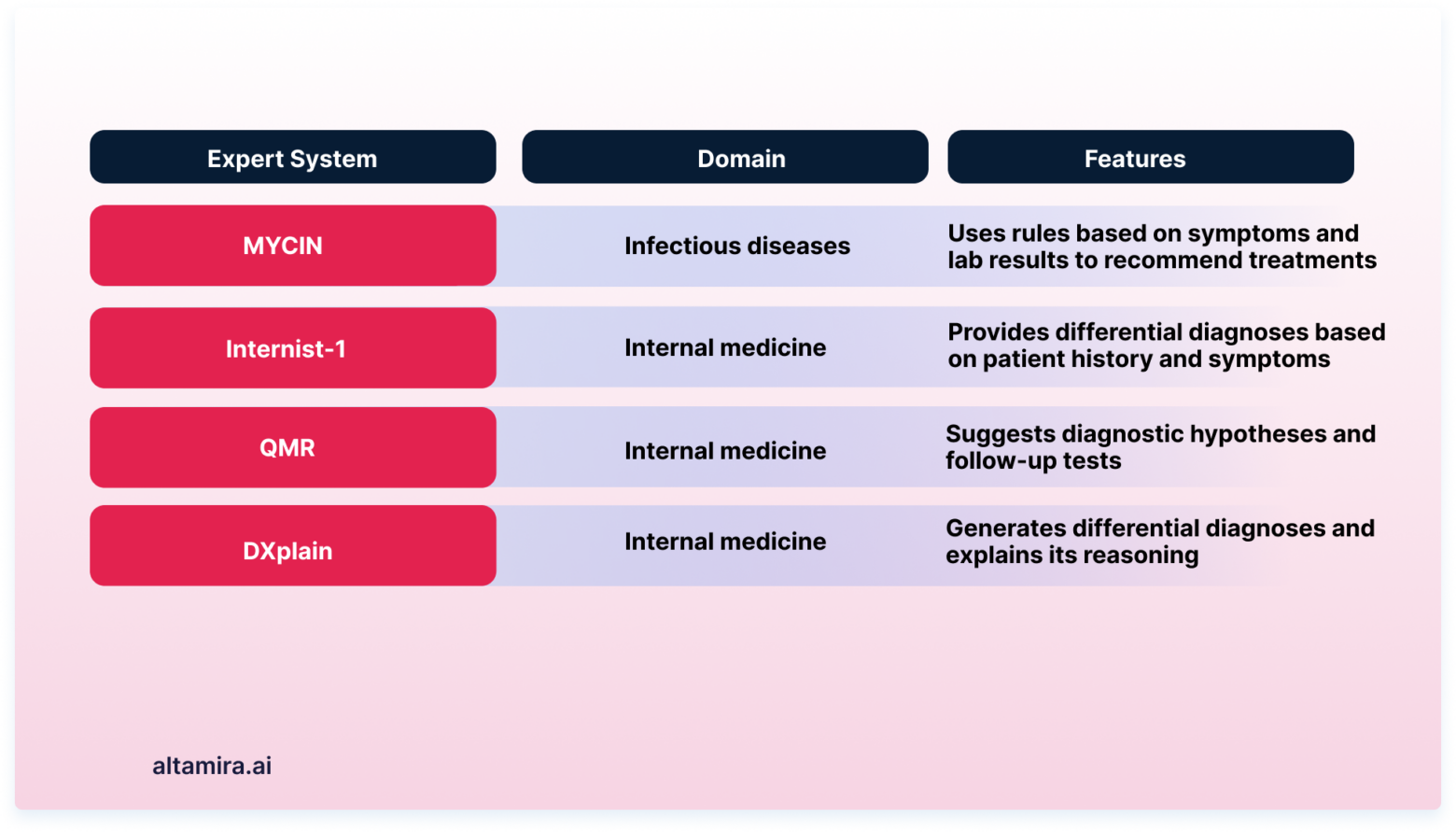

Symbolic AI is strongest in domains where the rules are clear and the knowledge can be written down. Instead of learning from statistical patterns, these systems capture human expertise directly. That makes them dependable in environments where guesswork isn’t acceptable.

Healthcare is one of the best examples. Early expert systems were built entirely on symbolic logic, and many of them still influence medical software today. They worked from large rule sets describing symptoms, tests, diagnoses, and treatment paths, and they showed doctors exactly how each conclusion was reached.

Financial systems also rely on symbolic AI when the stakes are high. Fraud detection rules, for example, can be written to flag specific patterns such as unusual spending, geographic inconsistencies, or timing anomalies. These decisions must be defensible, especially when money is frozen or accounts are locked.
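A minimal sketch of such rules might look like the following. The `Transaction` fields and every threshold are invented for illustration and are not taken from any real fraud system.

```python
from dataclasses import dataclass

@dataclass
class Transaction:
    amount: float
    country: str
    home_country: str
    hour: int  # 0-23, cardholder's local time

# Hypothetical rules and thresholds, each with a human-readable name.
FRAUD_RULES = [
    ("unusual spending", lambda t: t.amount > 5000),
    ("geographic inconsistency", lambda t: t.country != t.home_country),
    ("timing anomaly", lambda t: 1 <= t.hour <= 4),
]

def flag_transaction(tx):
    """Return the name of every rule the transaction triggers."""
    return [name for name, rule in FRAUD_RULES if rule(tx)]

tx = Transaction(amount=7200.0, country="BR", home_country="GB", hour=3)
print(flag_transaction(tx))
# ['unusual spending', 'geographic inconsistency', 'timing anomaly']
```

If an account is locked, the list of triggered rule names is the justification, which is what makes the decision defensible.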

The same applies to compliance-heavy tasks. Lease agreements, tax rules, and asset classifications often follow strict logic. Machine learning models tend to approximate these patterns, whereas symbolic systems follow them exactly.

Manufacturing uses symbolic AI for scheduling and operations planning. Production lines come with hundreds of constraints, such as equipment limits, staffing, maintenance windows, and safety requirements. Rule-based systems handle these constraints directly, which helps prevent costly downtime or sequencing errors. Pharmaceutical quality control follows a similar pattern: strict requirements and no room for ambiguity.

As one operations lead noted, when contracts include exceptions or need to meet specific compliance standards, symbolic AI performs well because it checks every clause the same way every time.

Applications of machine learning

Machine learning performs best in areas where the patterns are too complex or too numerous for people to map by hand. With enough data, these systems can pick out details that would otherwise go unnoticed.

Image recognition is a classic example. Modern models identify objects, faces, and scene details with accuracy that rivals trained specialists. They do this by learning directly from millions of labeled images, not from a preset list of rules.

In language tasks, machine learning powers translation tools, virtual assistants, and search systems. These models work through billions of text samples to understand phrasing, tone, and context. That’s what makes everyday interactions feel smooth.

Personalized recommendations are another area where machine learning stands out. These systems track browsing patterns, purchase history, and engagement signals, then use those insights to predict what a user might want next. The business impact is well documented:

- 31% of eCommerce and retail revenue comes from recommendations
- 35% of Amazon’s sales are driven by its recommendation engine
- 49% of customers have bought something they didn’t plan to because of a recommendation
- Würth UK recorded a 1303% ROI after adding recommendation features
- Natural Baby Shower saw a 21% increase in average order value and a 31% boost in basket size

These examples illustrate a core strength of machine learning: it adapts. As more data comes in, the model refines its predictions. It notices new habits, shifts in demand, and subtle changes in user behavior, all without someone rewriting the rules.
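To illustrate that adaptation, here is a toy recommender based on co-purchase counts. It is a deliberately simple stand-in for real recommendation engines, and the class name `CoPurchaseRecommender` and the product names are made up.

```python
from collections import Counter, defaultdict
from itertools import permutations

class CoPurchaseRecommender:
    """Recommend the items most often bought together with a given item."""

    def __init__(self):
        self.together = defaultdict(Counter)

    def add_order(self, items):
        """Update co-purchase counts with one new order."""
        for a, b in permutations(set(items), 2):
            self.together[a][b] += 1

    def recommend(self, item, k=1):
        """Return up to k items with the highest co-purchase count."""
        return [other for other, _ in self.together[item].most_common(k)]

rec = CoPurchaseRecommender()
rec.add_order(["stroller", "rain cover"])
rec.add_order(["stroller", "rain cover"])
rec.add_order(["stroller", "cup holder"])
print(rec.recommend("stroller"))  # ['rain cover']

# Shopping habits shift; the new orders arrive as data and nobody edits a rule.
for _ in range(3):
    rec.add_order(["stroller", "cup holder"])
print(rec.recommend("stroller"))  # ['cup holder']
```

The recommendation changed because the data changed, not because anyone rewrote the logic.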

That ability to keep learning is what makes machine learning effective across many domains, from fraud detection to supply chain forecasting. The system improves with experience, and that improvement compounds over time.

The final word

Symbolic AI and machine learning don’t need to compete. Each brings strengths the other doesn’t, and many real-world problems benefit from both. When you combine rule-based reasoning with models that learn from data, you get systems that are easier to understand and better at scale.

For example, education tools can adjust to a student’s progress while still following clear instructional rules, and environmental systems can blend expert knowledge with data-driven forecasts. In these cases, neither approach works as well on its own.

Blending the two offers a simple promise: more reliable decisions and fewer blind spots. It’s a way to build AI that stays flexible without losing accountability, and that balance is what many fields need next.