Not long ago, getting a computer to understand human language was a stretch goal in AI. Models could spot patterns, but not meaning. With transformers, everything changed.

First introduced by Google researchers in 2017, the transformer architecture became the backbone of today’s most advanced AI systems. Within a few years, it powered models like BERT, GPT, and DALL·E - engines that now write, translate, summarize, and even create images.

Here’s a scale check: GPT-4, a transformer-based model, is reported to hold over a trillion parameters, although OpenAI has not published an official figure. That would make it more than a thousand times larger than the biggest models from a decade ago. This exponential growth isn’t just about size; it’s about capability.

Transformers enabled machines to grasp context, remember long sequences, and generate responses that feel almost human.

What makes transformers remarkable isn’t just their accuracy, but their flexibility. The same architecture that interprets text can analyze DNA, predict protein structures, or compose music.

Researchers now use transformers across industries, from mapping the human genome to detecting financial fraud, because they handle patterns in any sequential data.

In this article, we’ll explore what transformer models are, how they work, and why they’ve become the foundation of modern AI. You’ll see how attention, context, and parallelization turned a single idea into the driving force behind today’s intelligent systems.

What are transformers in artificial intelligence?

Transformers are a type of neural network that learns how different parts of a sequence relate to each other.

Instead of processing data one piece at a time, transformers look at the whole sequence at once, understanding context, order, and meaning together.

Take a simple question like “What is the color of the sky?”

A transformer can see that color and sky connect, and blue is the likely answer. That understanding comes from how it maps relationships between words inside a sentence.

This same approach powers much more complex tasks.

Companies use transformers to:

Turn speech into text

Translate languages

Analyze protein sequences

Summarize long documents

In short, transformers understand how each piece fits within the bigger picture.

https://www.youtube.com/watch?v=JZLZQVmfGn8

Why are transformers important?

Early language models could predict what came next in a sentence, but only by looking one word at a time.

They worked a bit like your phone’s autocomplete: guessing the next word based on what usually follows. It was enough for short phrases, but not for real understanding.

Imagine trying to write a paragraph where the first and last sentences connect — “I am from Italy. I like horse riding. I speak Italian.”

Older models would lose the link between Italy and Italian halfway through. They couldn’t hold onto meaning over long stretches of text.
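To make the autocomplete comparison concrete, here is a minimal sketch of a bigram model, the simplest kind of "what usually follows" predictor. It is only an illustration of the idea, not a reconstruction of any specific early system, and the names `train_bigram` and `predict_next` are hypothetical.

```python
from collections import Counter, defaultdict

def train_bigram(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        follows[prev][nxt] += 1
    return follows

def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]

corpus = "i am from italy . i like horse riding . i speak italian ."
model = train_bigram(corpus)

# The guess depends only on the single previous word.
print(predict_next(model, "speak"))  # italian
print(predict_next(model, "zebra"))  # None (never seen in training)
```

Notice that the prediction after "speak" would be the same no matter which country appeared earlier in the text. The model has no way to look back at "Italy", which is exactly the gap described above.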

With transformers, this pattern changed.

They process entire sequences at once, keeping track of how every word relates to every other. That means they can hold onto context across long passages, up to the limit of the model’s context window, not just short phrases.

This shift reshaped natural language processing, allowing systems to translate languages, summarize long articles, write code, and even reason through complex prompts.

In short, transformers made it possible for machines to understand context, not just count words.

Transformers made large-scale AI possible

By processing entire sequences at once, not step by step, they take advantage of parallel computation. That makes training in particular much faster, which is how massive models like GPT and BERT became feasible.

These models hold billions of parameters, learning patterns across language, reasoning, and knowledge. They’re not just better at specific tasks; they’re moving AI closer to systems that can generalize across many.

Transformers also made customization simpler

Through techniques like transfer learning and retrieval-augmented generation (RAG), you can adapt a pretrained model to your data or industry without starting from scratch.

A model trained on the open web can then be fine-tuned for healthcare, finance, or education using much smaller datasets. That change opened access to advanced AI, even for teams without massive computing budgets.

And they go beyond text

Transformers can handle multiple data types at once, such as text, images, and audio, making multimodal AI a reality. That’s how tools like DALL·E generate images from written prompts, blending language and vision into a single model.

The impact extends beyond research papers. Transformers redefined how AI is built, deployed, and used, powering tools that understand, create, and assist across industries.

They turned AI from a collection of narrow systems into something that feels much closer to how humans think and connect information.

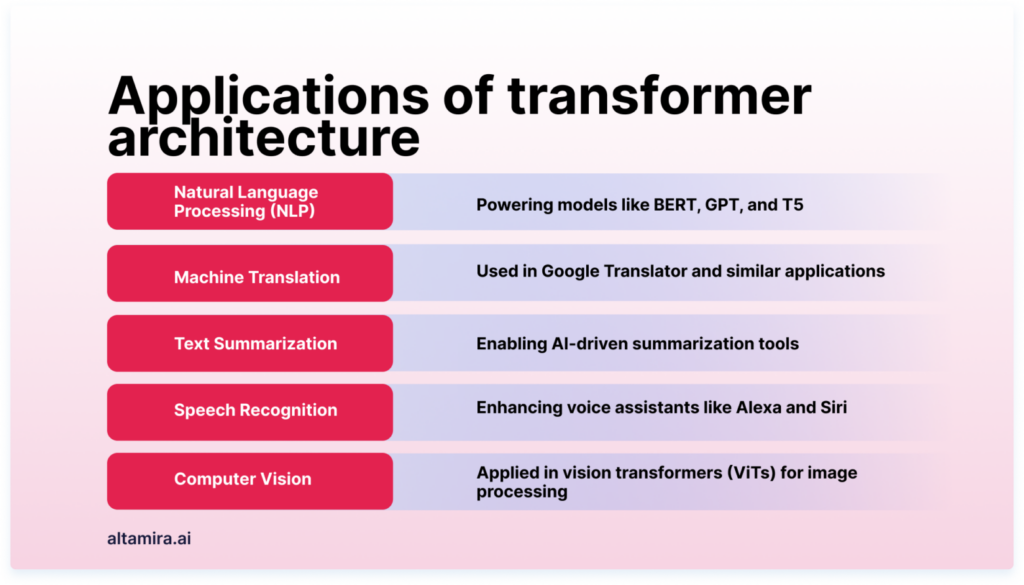

What are the use cases for transformers?

Transformers aren’t limited to text: they work with any kind of sequential data, including language, music, code, and even DNA. That’s what makes them so powerful and flexible. Here are some of the ways they’re used today.

Natural language processing

Transformers help machines understand and generate language that sounds natural. They can summarize long documents, draft consistent text, or respond to questions in context.

Voice assistants like Alexa rely on transformers to interpret and answer spoken commands accurately.

Machine translation

Translating between languages used to be mechanical and error-prone. Transformers changed that by analyzing full sentences at once instead of word by word. That’s why modern translation tools now produce smooth, context-aware results in real time.

DNA sequence analysis

Think of DNA as a language made of four letters — A, T, C, and G. Transformers can read that “language” and spot relationships between sequences. This helps scientists predict how mutations might affect health and tailor treatments to individuals.
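Before a model can read DNA, the sequence has to be cut into tokens. One common choice, assumed here purely for illustration, is to split it into overlapping "k-mers" (short windows of k letters) and give each distinct k-mer a number. The function name `kmer_tokens` is hypothetical.

```python
def kmer_tokens(sequence, k=3):
    """Split a DNA string into overlapping windows of length k."""
    seq = sequence.upper()
    return [seq[i:i + k] for i in range(len(seq) - k + 1)]

tokens = kmer_tokens("ATCGGA")
print(tokens)  # ['ATC', 'TCG', 'CGG', 'GGA']

# Map each distinct k-mer to an integer id, as a text tokenizer does for words.
vocab = {tok: i for i, tok in enumerate(sorted(set(tokens)))}
ids = [vocab[tok] for tok in tokens]
print(ids)  # [0, 3, 1, 2]
```

From this point on, the sequence of ids can be handled like any other tokenized text.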

Protein structure prediction

Proteins are long chains of amino acids that fold into complex shapes. Transformers can model these sequences and predict how they’ll fold, giving researchers insights for drug discovery and disease understanding.

In short, transformers reshaped how AI learns from data. They’ve redefined natural language processing and are now driving progress in biology, medicine, and beyond.

What is a transformer model?

A transformer model is a type of neural network built to understand sequences, like the order of words in a sentence or steps in a process.

What makes it special is how it pays attention to relationships within that sequence. Using a mechanism called self-attention, it can connect ideas that appear far apart, for example, linking a noun at the start of a sentence with a verb near the end.

In 2021, researchers at Stanford coined the term foundation models for large models trained on broad data and then adapted to many tasks. It’s a fitting label for transformers, since they now serve as the base for nearly every major advance in modern artificial intelligence.

What can transformer models do?

Transformer models are behind many of the tools you already use every day.

They can translate text or speech almost instantly, turning live meetings or classrooms into inclusive spaces where language and accessibility barriers fade.

In science, transformers help researchers understand DNA and protein sequences, revealing insights that speed up drug discovery and improve treatment design.

In business, they spot trends and anomalies across huge datasets, flagging potential fraud, optimizing factory performance, and improving patient outcomes in healthcare.

And on the web? Every time you search on Google or Bing, a transformer model is working in the background, interpreting your intent, ranking results, and predicting what you might need next.

In short, transformers are no longer experimental. They’re the quiet engine driving the systems you rely on every day.

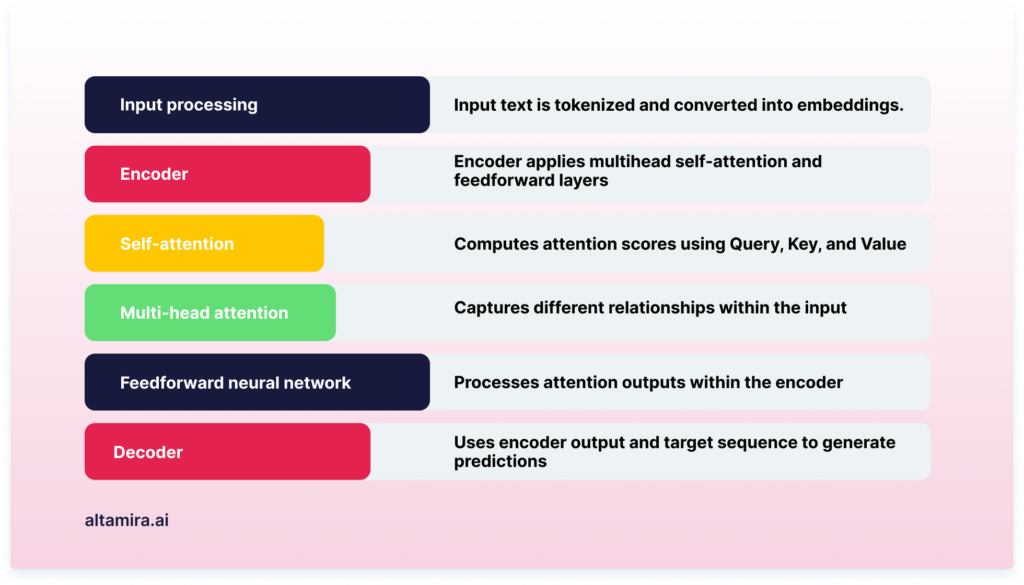

The transformer architecture

The transformer’s power comes from how it’s built. Its architecture is designed to understand relationships between words, not just their order, and to do it all in parallel. Here’s how the main pieces work together.

Encoder

The encoder takes in the input sequence and turns each token — each word or symbol — into a numerical representation.

Unlike older models that process tokens one by one, the encoder looks at how every token relates to every other.

It does this through several stacked layers (six in the original design), gradually refining its understanding of context and meaning.

The result is a set of vectors, compact representations of what each word means in relation to the whole sentence.
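The first step, turning tokens into numbers, can be sketched as a simple lookup table. This is a toy version under stated assumptions: the vocabulary is built from one sentence, the vectors are random rather than learned, and `build_vocab` and `make_embedding_table` are hypothetical helper names.

```python
import random

def build_vocab(tokens):
    """Assign each distinct token an integer id in order of first appearance."""
    vocab = {}
    for tok in tokens:
        vocab.setdefault(tok, len(vocab))
    return vocab

def make_embedding_table(vocab_size, d_model, seed=0):
    """One vector per vocabulary entry. Random here; learned during training in a real model."""
    rng = random.Random(seed)
    return [[rng.uniform(-1.0, 1.0) for _ in range(d_model)]
            for _ in range(vocab_size)]

tokens = "the cat sat on the mat".split()
vocab = build_vocab(tokens)
table = make_embedding_table(len(vocab), d_model=4)

token_ids = [vocab[t] for t in tokens]
vectors = [table[i] for i in token_ids]

print(token_ids)  # [0, 1, 2, 3, 0, 4]
print(len(vectors), len(vectors[0]))  # 6 4
```

Both occurrences of "the" start out with the same vector. It is the attention layers that later give each one a meaning that depends on its neighbors.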

Decoder

The decoder uses those encoded representations to generate the output. It has a similar layered structure but adds one crucial rule: it can’t “peek” ahead.

Each token it predicts must be based only on what’s already been generated. Through masked self-attention, attention over the encoder’s output, and feed-forward networks, the decoder shapes these insights into fluent, coherent sentences, one token at a time.
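The "no peeking" rule is usually enforced with a causal mask: position i may only attend to positions up to and including i. Here is a minimal sketch in plain Python, assuming made-up attention scores of zero everywhere so the effect of the mask is easy to see. The function names are hypothetical.

```python
import math

def causal_mask(n):
    """mask[i][j] is True when position i may attend to position j (j <= i)."""
    return [[j <= i for j in range(n)] for i in range(n)]

def masked_softmax(scores, mask):
    """Softmax over each row, giving zero weight to masked-out (future) positions."""
    out = []
    for row, allowed in zip(scores, mask):
        peak = max(s for s, ok in zip(row, allowed) if ok)
        exps = [math.exp(s - peak) if ok else 0.0 for s, ok in zip(row, allowed)]
        total = sum(exps)
        out.append([e / total for e in exps])
    return out

scores = [[0.0, 0.0, 0.0] for _ in range(3)]  # hypothetical raw attention scores
weights = masked_softmax(scores, causal_mask(3))
for row in weights:
    print([round(w, 2) for w in row])
# [1.0, 0.0, 0.0]
# [0.5, 0.5, 0.0]
# [0.33, 0.33, 0.33]
```

The first position can only see itself, the second sees two positions, and only the last sees the whole sequence.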

Self-attention mechanism

Self-attention is the transformer’s secret sauce. It lets the model decide which words in a sentence are most relevant to each other. When processing “The cat sat on the mat,” the model can link cat and sat directly, and it could do so just as easily if many words separated them.

This mechanism helps the network capture meaning across the full context, not just nearby words.
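Under the hood, this is scaled dot-product attention: score every pair of tokens, turn each row of scores into weights with a softmax, and use those weights to blend the value vectors. The sketch below is a simplification, since it feeds the same toy vectors in as queries, keys, and values instead of applying the learned projections a real model would use.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of numbers."""
    peak = max(xs)
    exps = [math.exp(x - peak) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def self_attention(queries, keys, values):
    """Return (outputs, weights) for scaled dot-product attention."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        scores = [dot(q, k) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        blended = [sum(w * v[c] for w, v in zip(weights, values))
                   for c in range(len(values[0]))]
        outputs.append(blended)
        all_weights.append(weights)
    return outputs, all_weights

# Three toy token vectors (assumed values, not real embeddings).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(x, x, x)

for row in weights:
    print([round(w, 3) for w in row])
```

Each row of weights sums to 1 and says how much that token draws from every other token, regardless of distance.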

Multi-head attention

Rather than relying on a single perspective, transformers use multiple “attention heads.” Each head looks at different relationships — one might focus on grammar, another on tone or subject.

The model combines these viewpoints into richer, more accurate representations, improving how it understands and generates language.
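A rough way to picture multiple heads: slice each token vector into equal chunks, run attention on each chunk separately, then glue the results back together. The sketch below does just that. It deliberately leaves out the learned per-head projections and the final output projection that real models apply, and all function names are hypothetical.

```python
import math

def attention(q, k, v):
    """Scaled dot-product attention over lists of vectors."""
    d_k = len(k[0])
    out = []
    for qi in q:
        scores = [sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d_k) for kj in k]
        peak = max(scores)
        exps = [math.exp(s - peak) for s in scores]
        total = sum(exps)
        weights = [e / total for e in exps]
        out.append([sum(w * vj[c] for w, vj in zip(weights, v))
                    for c in range(len(v[0]))])
    return out

def split_heads(x, num_heads):
    """Slice every vector into num_heads equal chunks; returns one sequence per head."""
    d_head = len(x[0]) // num_heads
    return [[vec[h * d_head:(h + 1) * d_head] for vec in x]
            for h in range(num_heads)]

def multi_head_attention(x, num_heads):
    if len(x[0]) % num_heads != 0:
        raise ValueError("vector width must be divisible by num_heads")
    heads = [attention(part, part, part) for part in split_heads(x, num_heads)]
    # Concatenate the per-head outputs back into full-width vectors.
    return [[val for head in heads for val in head[i]] for i in range(len(x))]

x = [[1.0, 0.0, 0.0, 1.0],
     [0.0, 1.0, 1.0, 0.0],
     [1.0, 1.0, 0.0, 0.0]]
result = multi_head_attention(x, num_heads=2)
print(len(result), len(result[0]))  # 3 4
```

Because each head sees a different slice of the vector, each one can end up weighting the tokens differently.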

Positional encoding

Because transformers process all tokens at once, they need a way to know where each token sits in a sequence. Positional encodings provide that structure. The original design used patterns based on sine and cosine functions, while many later models learn their position information instead.

They tell the model which word came first, second, and so on, preserving order without step-by-step processing.
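For the sine and cosine variant, the original transformer paper defines each value as sin(pos / 10000^(2i/d_model)) for even dimensions and the matching cosine for odd ones. Here is a minimal sketch of that formula, with a hypothetical function name and a small sequence length and width chosen only for readability.

```python
import math

def positional_encoding(seq_len, d_model):
    """Sinusoidal position vectors: sine in even slots, cosine in odd slots."""
    table = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model):
            # Each sin/cos pair shares one frequency, set by the pair index i // 2.
            angle = pos / (10000 ** ((2 * (i // 2)) / d_model))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table

pe = positional_encoding(seq_len=4, d_model=8)
print(pe[0])  # [0.0, 1.0, 0.0, 1.0, 0.0, 1.0, 0.0, 1.0]
print([round(v, 3) for v in pe[1]])
```

These vectors are added to the token embeddings, so two identical words at different positions no longer look identical to the model.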

Together, these components let transformers understand language with remarkable precision — context, meaning, and sequence, all at once.

Advantages of transformer models

Transformer models changed how machines learn from language, which is why they’re now the foundation of modern AI. Here’s what makes them stand out.

Parallelization

Earlier models like RNNs and LSTMs handled data one step at a time, which slowed everything down.

Transformers process entire sequences at once, using self-attention to look at every word in parallel.

This makes training dramatically faster and lets GPUs and TPUs be used far more efficiently.
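The difference comes down to dependencies between steps. The toy sketch below is not a real RNN or attention layer, and its function names and the `weight` value are made up. It only contrasts a loop where each step needs the previous result with a computation where every entry stands alone.

```python
import math

def recurrent_pass(inputs, weight=0.5):
    """Sequential: each hidden state needs the previous one, so steps must run in order."""
    hidden, states = 0.0, []
    for x in inputs:
        hidden = math.tanh(weight * hidden + x)
        states.append(hidden)
    return states

def pairwise_scores(inputs):
    """Independent: every (i, j) score depends only on the inputs, never on another score."""
    return [[a * b for b in inputs] for a in inputs]

inputs = [1.0, 2.0, 3.0]
print(recurrent_pass(inputs))
print(pairwise_scores(inputs))
```

Since no entry of the score table waits on another, hardware with thousands of cores can compute them all at the same time.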

Understanding long-range context

Traditional models often forgot what came earlier in a long sentence.

Transformers fixed that. Each word can now interact with every other word in the sequence, keeping meaning intact from start to finish. That’s how they capture nuance, tone, and context across paragraphs — not just phrases.

Scalability

Transformers scale easily. You can train small models for focused tasks or expand them into massive systems with billions of parameters.

That flexibility explains the rise of large language models like GPT and BERT, which all build on the same core design.
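To get a feel for how size grows, you can count the weights in one standard layer: four square projections for attention (query, key, value, output), two matrices for the feed-forward block, and two layer norms. The sketch below is a back-of-the-envelope count that ignores embeddings and assumes biases on every projection. The width 512, feed-forward size 2048, and six layers match the base encoder configuration in the original paper.

```python
def layer_params(d_model, d_ff):
    """Approximate trainable parameters in one encoder-style transformer layer."""
    attention = 4 * (d_model * d_model + d_model)  # Q, K, V, output, each with bias
    feed_forward = (d_model * d_ff + d_ff) + (d_ff * d_model + d_model)
    layer_norms = 2 * (2 * d_model)                # two norms, each with scale and shift
    return attention + feed_forward + layer_norms

def stack_params(num_layers, d_model, d_ff):
    """Parameters in a stack of identical layers (embeddings not included)."""
    return num_layers * layer_params(d_model, d_ff)

print(layer_params(512, 2048))     # 3152384
print(stack_params(6, 512, 2048))  # 18914304
```

Because the count grows with the square of the width, doubling `d_model` (and `d_ff` along with it) roughly quadruples the size of each layer.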

Transfer learning

Once pretrained on huge datasets, transformers can be fine-tuned for specific industries or tasks with minimal extra data.

This reuse of learned patterns speeds up development and lowers costs, making high-performing AI accessible even to smaller teams.

Stable learning (No vanishing gradients)

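Deep neural networks used to struggle as more layers were stacked, because the training signal faded on its way back through them. Transformers ease that problem with residual connections and layer normalization, which keep gradients flowing through deep stacks. Attention also gives every token a direct path to every other, preserving information from the beginning of a sequence to the end.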
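One widely used stabilizing device in transformer layers is the residual (skip) connection followed by layer normalization: the input is added back to whatever the sublayer produced, then rescaled. Here is a minimal sketch of that step, assuming the post-norm arrangement and leaving out the learned scale and shift that a real layer norm includes. The names are hypothetical.

```python
import math

def layer_norm(vec, eps=1e-5):
    """Rescale a vector to zero mean and (approximately) unit variance."""
    mean = sum(vec) / len(vec)
    var = sum((v - mean) ** 2 for v in vec) / len(vec)
    return [(v - mean) / math.sqrt(var + eps) for v in vec]

def residual_block(vec, sublayer):
    """Post-norm residual step: layer_norm(x + sublayer(x))."""
    added = [x + y for x, y in zip(vec, sublayer(vec))]
    return layer_norm(added)

def zero_sublayer(vec):
    """Stand-in for a sublayer that has learned nothing yet."""
    return [0.0 for _ in vec]

x = [1.0, 2.0, 3.0]
y = residual_block(x, zero_sublayer)
print([round(v, 3) for v in y])
```

Even when the sublayer contributes nothing, the input still passes through the skip path, which is what keeps the signal alive in very deep stacks.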

Interpretability

Because attention weights show which words the model “pays attention” to, you get a window into why it made a decision.

If a model identifies a review as negative, for instance, the weights can highlight which phrases carried the most influence, though they are a clue rather than a complete explanation.

State-of-the-art results

From translation to summarization to sentiment analysis, transformers consistently outperform earlier models.

Their ability to learn from massive datasets and understand language in context has set a new benchmark across AI applications.

The attention advantage

Attention is what gives transformers their edge. They weigh meaning dynamically, adjusting focus as context changes.

That’s what allows them to read, reason, and respond like they actually understand what’s being said.

The final words

Transformers completely reshaped how AI understands and works with sequential data. Their architecture introduced a faster and smarter way to train and scale deep learning models, turning once-experimental ideas into systems that now power everyday tools.

As research evolves, transformers will push these use cases much further, from language and vision to science and automation.