Image recognition is a cutting-edge technology that is actively used by businesses.

Education, insurance, healthcare, manufacturing, agriculture, and even security companies already rely on image recognition systems and benefit from using them on a daily basis. Just check out our blog post dedicated to the most interesting use cases of image recognition, and you will be quite impressed and surprised.

Here at Altamira, we have software development experts who have already worked on image recognition apps and created a proof of concept for the insurance business. And today, one of those specialists would like to share his expertise and knowledge with you.

Max Galaktionov is a valuable member of our tech team with more than 15 years of development experience. He will talk about image recognition in a nutshell and explain some practical aspects of training such smart systems.

Max will also tell us how image recognition can be used for car damage detection. So, without further ado, let's proceed to this very interesting topic right away.

The basics of computer vision: an overview

To understand the essence of image recognition, it is necessary to start with the definition of computer vision and how it works. Basically, computer vision is a scientific and engineering field that enables computers to gain a high-level understanding of image or video content.

Common computer vision workflows involve gathering, preparing, processing and analysing visual data, and then producing numerical predictions of what the visual content depicts.

For example, computer vision is already actively used in the manufacturing industry to detect defects in equipment, perform visual search, and preserve high packaging standards.

The goals of computer vision include, but are not limited to, object detection, image recognition, image classification, learning, and event detection. In this article, we'd like to focus on the image recognition model, its specifics, and its use cases.

We will also provide some tips on how to train an image recognition model.

How AI image recognition works

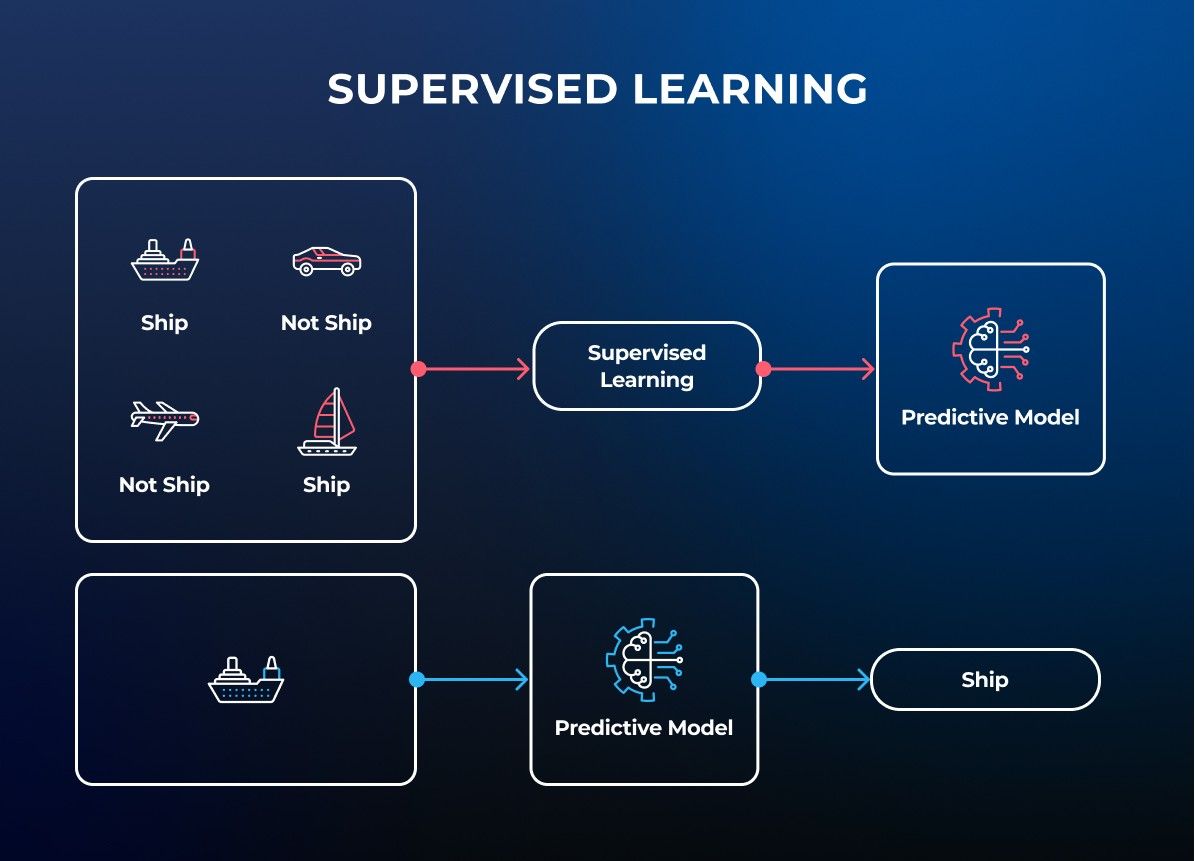

The vast majority of image recognition solutions are built on deep convolutional neural networks (CNNs). A convolutional neural network is a multilayered neural network built to detect complex features, similarities and patterns in the data.

The first part of an image recognition model is the encoder, which is itself a stack of convolutional layers. The encoder learns statistical patterns in the pixels of images that correspond to the classes (or labels) the model is trying to predict.

The output of the encoder then goes to fully connected (dense) layers, which produce a confidence score for each label for every image.

A label is assigned to the image or object when it has the highest confidence score and that score is above a particular threshold. In the case of multi-label recognition, several labels can be assigned to the same image, each with its own confidence score.
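To make the thresholding step more concrete, here is a minimal sketch in plain Python. It assumes the dense layers return raw scores (logits); the function names, the three example labels and the 0.5 threshold are our own illustrative choices, not part of any specific framework.

```python
import math

def softmax(logits):
    """Turn raw scores into confidence scores that sum to 1."""
    shift = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(x - shift) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def assign_label(logits, labels, threshold=0.5):
    """Single-label case: return (label, confidence), or (None, confidence)
    when even the best score does not clear the threshold."""
    scores = softmax(logits)
    best = max(range(len(scores)), key=scores.__getitem__)
    if scores[best] > threshold:
        return labels[best], scores[best]
    return None, scores[best]

def assign_multi_labels(logits, labels, threshold=0.5):
    """Case where several labels may apply: score every label
    independently with a sigmoid and keep those above the threshold."""
    scores = [1 / (1 + math.exp(-x)) for x in logits]
    return [(label, s) for label, s in zip(labels, scores) if s > threshold]

LABELS = ["cat", "dog", "car"]  # hypothetical classes

print(assign_label([0.2, 0.1, 3.0], LABELS))         # confident: "car"
print(assign_label([1.0, 1.1, 0.9], LABELS))         # too uncertain: None
print(assign_multi_labels([2.0, -1.0, 0.5], LABELS)) # "cat" and "car"
```

The threshold is a business decision as much as a technical one: a higher value means fewer wrong labels but more images left unlabelled.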

Although a convolutional neural network can reach a high precision, on the order of 95%, it is very sensitive to its dataset, which has to be large and requires a lot of resources to train on.

Image classification task

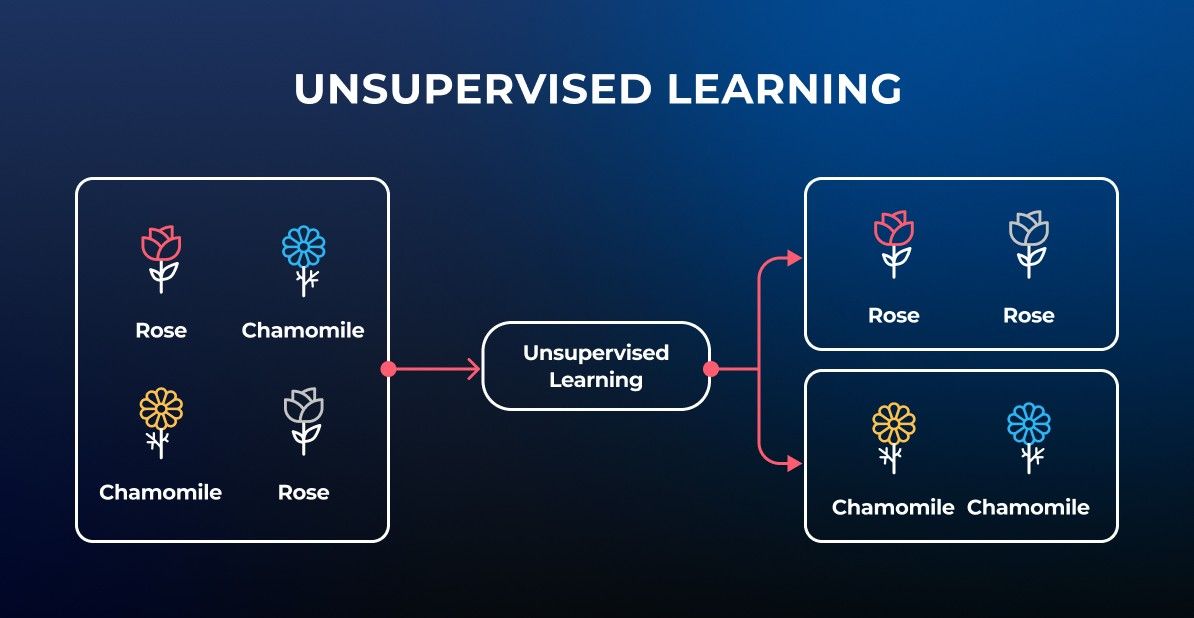

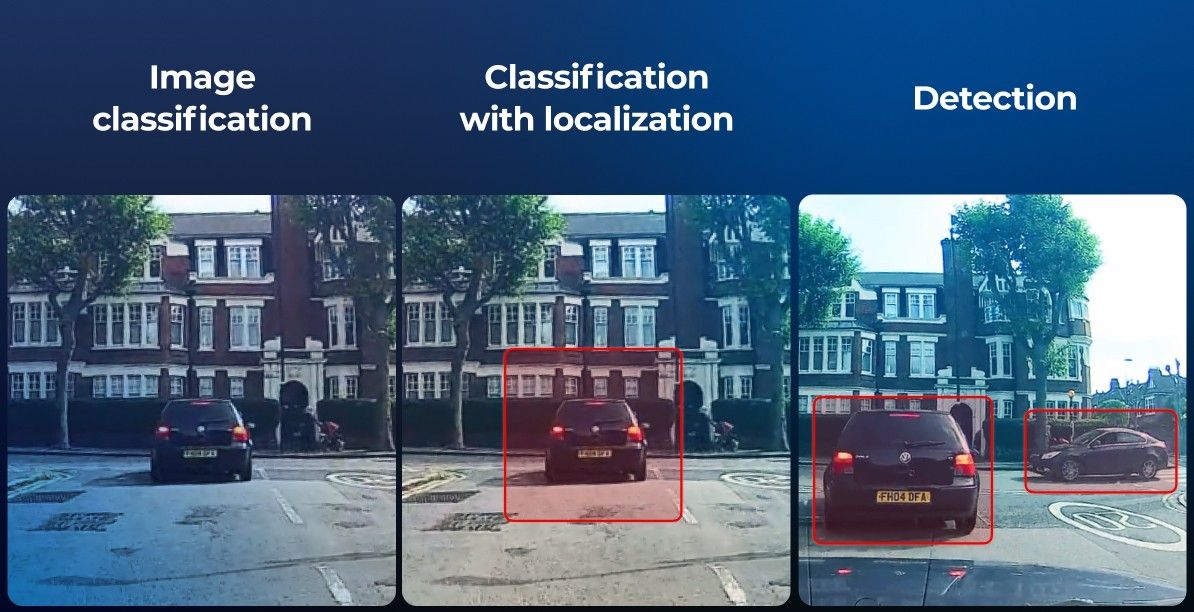

As we've mentioned earlier, image recognition is a computer vision task that aims to identify targeted objects in an image or video, and then classify them, detect where they are, and label them with a certain category.

The object categories the model should recognise have to be defined in advance as so-called target classes.

In addition to the label itself, the confidence score, expressed as a number or a percentage, is another crucial output.

Now, let's delve into some practical aspects and explore how image recognition algorithms are trained.

Practical aspects of image recognition

Building great image recognition software for business is not enough, since such a solution will require proper training. After all, to benefit from an image recognition application, you need it to be able to analyse an entire image, identify to what category an image belongs, and then perform the right image classification, pattern recognition or object detection.

And that's exactly what proper model training helps to achieve. Let's talk about it right now and see how it works.

Dataset organization

Everything starts with preparing a quality dataset for training. Such a dataset has to contain pre-labelled images.

To increase the precision of the algorithm, the dataset should be as large as practical: ideally thousands of images, and in large-scale systems far more, for each class that needs to be classified.

Also, a separate dataset should be prepared for further testing and tuning procedures. Such datasets are usually smaller, at 10% to 33% of the size of the main dataset.
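As a rough illustration of holding out such a separate dataset, the sketch below shuffles a list of labelled samples and sets a fraction aside. The function name, the 20% fraction and the fixed seed are assumptions for the example only.

```python
import random

def split_dataset(samples, holdout_fraction=0.2, seed=42):
    """Shuffle the samples and split them into (main, holdout) lists."""
    items = list(samples)
    random.Random(seed).shuffle(items)  # fixed seed keeps the split reproducible
    n_holdout = int(len(items) * holdout_fraction)
    return items[n_holdout:], items[:n_holdout]

# Hypothetical dataset: (file name, label) pairs.
dataset = [(f"img_{i:03d}.jpg", "damaged" if i % 2 else "undamaged")
           for i in range(100)]

train_set, holdout_set = split_dataset(dataset, holdout_fraction=0.2)
print(len(train_set), len(holdout_set))
```

Shuffling before splitting matters: if the files are sorted by class or by date, an unshuffled split can leave the holdout set unrepresentative of the main dataset.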

Image recognition model training

Analysing a whole image as a raw matrix of pixels would be too complex, so a convolutional neural network first passes each image through a set of filters (convolution kernels).

The filtered image data then moves through the network in the form of feature maps. Successive layers of the model analyse these feature maps with high precision, building a deep hierarchy of features from simple edges up to complex shapes.

All feature maps (or sections) are then passed through an activation function, usually the Rectified Linear Unit (ReLU), which sets negative values to zero and increases the speed and effectiveness of model learning.
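The filter and ReLU steps are easier to picture on a tiny example. The sketch below is plain Python rather than a real deep learning framework, and the 4x4 image and the vertical-edge filter are made up for illustration; in a trained network the filter values are learned, not written by hand.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image without padding.
    (CNN libraries call this convolution; strictly it is cross-correlation.)"""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

def relu(feature_map):
    """Rectified Linear Unit: replace every negative value with zero."""
    return [[max(0, v) for v in row] for row in feature_map]

# A bright vertical stripe on a dark background (pixel intensities).
image = [
    [0, 9, 9, 0],
    [0, 9, 9, 0],
    [0, 9, 9, 0],
    [0, 9, 9, 0],
]

# Responds positively to dark-to-bright transitions from left to right.
vertical_edge = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

feature_map = convolve2d(image, vertical_edge)
print(feature_map)        # [[27, -27], [27, -27]]
print(relu(feature_map))  # [[27, 0], [27, 0]]
```

The filter fires on the left edge of the stripe and gives a negative response on the right edge, which ReLU then discards.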

Model tuning (or fine-tuning)

Fine-tuning is the process of analysing training results and choosing the training parameters that fit the model best. In general, that means we refine our model (a layered network) by training it further.

This further training happens at a low learning rate, so the weights inside the layers are adjusted gradually rather than overwritten. The result is a better internal representation across the layers, which further increases the efficiency of the model.

Model tuning is an important process that greatly increases the accuracy of the trained model.

It is highly recommended to tune the model on each iteration of training, though it's important to remember that this process is expensive in terms of resources and time.

Model testing

The image recognition model is tested on the validation dataset, which is kept apart from the training data and can be reshuffled between training iterations.

Testing can be automated, and the result has to be compared with a threshold or a desired accuracy.

If the result is unsatisfactory, the model can be retrained with adjusted parameters, typically with more iterations and a lower learning rate, so that the model fits the data more closely.

Cross-validation can also be used. It means splitting the combined training and validation data into smaller chunks and rotating which chunk is used for validation. When you use cross-validation, the test dataset must still be held out separately.

Quality improvement

Once the model is properly trained, you can proceed with its quality improvement. To improve the quality of a certain image recognition model, it is recommended to follow these three key steps.

#1 Increase the size of the dataset

Convolutional neural networks are sensitive to the size of the training dataset. So to significantly increase the prediction accuracy, your image dataset has to be large, ideally with thousands of images or more per classification label.

#2 Perform data augmentation

This approach lets you increase image recognition accuracy and reach the desired numbers when the dataset is not big enough. Data augmentation means making minor changes to existing samples.

For example, you can modify samples with random transformations: mirroring the image, rotating it, converting it to grayscale, etc.

These transformations allow for increasing the dataset size in a very simple and yet effective way, and to improve the training process.
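Here is a small sketch of such transformations, written in plain Python on nested lists so that it runs without any imaging library. In a real project you would more likely rely on the augmentation utilities of your deep learning framework; the function names below are our own.

```python
import random

def mirror(image):
    """Flip the image horizontally."""
    return [list(reversed(row)) for row in image]

def rotate90(image):
    """Rotate the image 90 degrees clockwise."""
    return [list(row) for row in zip(*image[::-1])]

def to_grayscale(image):
    """Convert (R, G, B) pixels to a single luminance value
    using the common 0.299 / 0.587 / 0.114 weights."""
    return [[round(0.299 * r + 0.587 * g + 0.114 * b) for (r, g, b) in row]
            for row in image]

def augment(image, rng):
    """Apply one randomly chosen geometric transformation."""
    transform = rng.choice([mirror, rotate90])
    return transform(image)

tiny = [[1, 2],
        [3, 4]]
print(mirror(tiny))    # [[2, 1], [4, 3]]
print(rotate90(tiny))  # [[3, 1], [4, 2]]

rng = random.Random(0)
extra_samples = [augment(tiny, rng) for _ in range(3)]  # new training samples
```

The label of each augmented sample stays the same as the original, which is why only transformations that do not change what the image shows should be used.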

#3 Do cross-validation (k-Fold)

This is a highly effective method that involves splitting the dataset into k parts (folds) and repeatedly using one fold as the validation set and the remaining folds as the training set.

The model is trained on the training set and tested on the validation set, and then the model and its score are saved. Once that is done, another fold is selected as the validation set and the model is retrained, until all k iterations are finished.

The final score is the average over all iterations. Although cross-validation is a great method, we do not recommend using it for tasks with huge numbers of classes.

The thing is that in this particular case, the model will not be able to learn effectively.
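The k-fold procedure can be sketched in a few lines of plain Python. The `train_and_score` callback is a placeholder we introduce for the example: in practice it would train your model on the training indices and return its accuracy on the validation indices.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, val_indices) for each of the k folds.
    Every sample ends up in the validation set exactly once."""
    indices = list(range(n_samples))
    # Spread any remainder over the first folds so sizes differ by at most 1.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0)
                  for i in range(k)]
    start = 0
    for size in fold_sizes:
        val = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, val
        start += size

def cross_validate(n_samples, k, train_and_score):
    """Run all k iterations and return the average score."""
    scores = [train_and_score(train, val)
              for train, val in k_fold_indices(n_samples, k)]
    return sum(scores) / len(scores)

# Inspect the folds for 10 samples and k = 3.
for train_idx, val_idx in k_fold_indices(10, 3):
    print("validate on", val_idx, "train on", train_idx)

# Placeholder scorer, only to show the call shape (not a real model).
average = cross_validate(10, 5, lambda train, val: len(train) / 10)
print(average)
```

If the dataset is ordered by class, shuffle it before creating the folds, otherwise some folds may miss whole classes.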

How image recognition is used by businesses

Thanks to its similarity to how the human brain and vision work, image recognition has become a must-have for many businesses. It has made it possible to automate many processes that previously relied on human labour.

Among the industries that have benefited the most from computer vision and image recognition solutions, insurance is a good example.

Car damage recognition software

The Altamira team has already implemented image recognition to help insurance businesses with the detection of car damage.

Thanks to a deep neural network, our proof of concept and future smart solution can easily detect what kind of damage is present on a car, and then help insurance agents to decide what kind of compensation should be issued for every car crash case.

And now let's take a sneak peek at how everything works.

Our solution for car damage recognition is a set of algorithms and trained AI models that can analyse an image or set of images and understand if the car was damaged, how severely and in what particular place.

This is achieved by a set of gates specialising in certain tasks, increasing the overall precision and effectiveness of the whole solution.

Every gate runs on top of a dedicated model, trained on a dataset whose classes label car images by one narrow parameter to be analysed.

For example, the second model was trained on a dataset with five levels of car damage to achieve the best precision of damage level detection.

After an image has passed all gates, the highest-confidence predictions are returned to the person who uploaded it. This allows that person to understand whether the car was damaged and to proceed with the insurance case.
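The idea of chaining specialised gates can be sketched as follows. This is a simplified illustration and not the actual Altamira implementation: the gate names, the labels, the confidence values and the stub models are all hypothetical stand-ins for trained networks.

```python
from dataclasses import dataclass

@dataclass
class Prediction:
    label: str
    confidence: float

def run_gates(image, gates):
    """Pass an image through each gate in order.

    `gates` is a list of (name, model, stop_labels) tuples. When a gate
    predicts one of its stop labels, the remaining gates are skipped.
    """
    results = {}
    for name, model, stop_labels in gates:
        prediction = model(image)
        results[name] = prediction
        if prediction.label in stop_labels:
            break
    return results

# Stub models with fixed outputs (hypothetical, for illustration only).
def damage_model(image):
    return Prediction("damaged", 0.97)

def severity_model(image):
    return Prediction("level_3", 0.81)  # one of five damage levels

def location_model(image):
    return Prediction("front_bumper", 0.88)

GATES = [
    ("is_damaged", damage_model, {"undamaged"}),  # stop early if no damage
    ("severity", severity_model, set()),
    ("location", location_model, set()),
]

report = run_gates("car.jpg", GATES)
for gate_name, prediction in report.items():
    print(gate_name, prediction.label, prediction.confidence)
```

Keeping each gate narrow means every model only has to answer one question, and an undamaged car never reaches the more expensive severity and location models.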

To conclude

It is hard to overestimate the importance and power of image recognition. By introducing it and building image recognition apps for your business, you can cut costs, take routine work off your employees, reduce the possibility of human error, and even increase the accuracy and efficiency of your work.

For many industries, such as insurance, image recognition has become an irreplaceable helper.

If you invest in your own car damage recognition system and keep training and improving it, you can take your company to a whole new level. Different kinds of car damage will be detected within seconds, and all the insurance cases will be solved way faster as well.

Altamira has experienced developers and quality assurance specialists who can help you with image recognition app development.

Whatever idea you have in mind, we can turn it into a proof of concept and then transform it into a real project that will improve and amplify your business.

FAQ

What tasks can be performed by image recognition?

There are many of them, but the most popular tasks that businesses benefit from would be: facial recognition that is used for security purposes, image classification and segmentation empowering robotics and self-driving vehicles, scene and object character identification, and object recognition used mainly for visual search.

What technologies are used for image recognition systems?

Many of these solutions are built using the Python programming language and advanced technologies such as artificial intelligence and machine learning.

For deep learning models, programmers use deep-learning APIs like Keras or others. As to AI and machine learning, they help software engineers to train the system, increase its accuracy, and ensure better performance.

What businesses already use image recognition?

Speaking about business giants, we would name LinkedIn, Pinterest, Google, Sephora, Salesforce, and many more. Each year, more and more companies invest in image recognition solutions.

And according to statistics, the market for such apps is expected to grow by 20.7% over the next five years. So now is the time to think about implementing image recognition for your business.

How to train an image dataset?

Training an image dataset means preparing your images so a machine learning model can learn from them. First, label your images. Each one needs a clear tag (like “cat,” “dog,” or “car”). Tools like LabelImg or CVAT can help with that. Then, split your dataset into training, validation, and test sets, usually around 70/20/10.

Once that’s done, you can feed the images into a model using a framework like TensorFlow or PyTorch. You’ll also need to define the model architecture (e.g., a CNN), choose a loss function, and tune parameters like batch size and learning rate. It’s not just about the data—your choices during training matter just as much.

How long does it take to train an image recognition model?

Training time varies based on three things: dataset size, model complexity, and available hardware. A basic model with a few thousand labelled images might train in under an hour on a modern GPU. Larger datasets (tens or hundreds of thousands of images) or more complex models can take several hours or even days, especially if you’re training on a CPU.

Using transfer learning (starting from a pre-trained model) can speed things up significantly without sacrificing accuracy.

How do you train a model for image detection?

Image detection requires more than just labels; it also needs bounding boxes around objects. This means your dataset should include coordinates along with class labels.

Training usually involves models like YOLO, SSD, or Faster R-CNN. You’ll follow a process similar to image classification: load the dataset, preprocess the data, set up the model, train it on labelled images, and evaluate the results. You’ll also need to monitor for overfitting and adjust things like anchor boxes and IoU thresholds.
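Since IoU (intersection over union) thresholds come up in every detection project, here is a minimal sketch of how the value is computed for two bounding boxes. The `(x_min, y_min, x_max, y_max)` box format is an assumption for this example; detection frameworks use several different conventions.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as
    (x_min, y_min, x_max, y_max)."""
    # Corners of the overlapping rectangle, if there is one.
    ix_min = max(box_a[0], box_b[0])
    iy_min = max(box_a[1], box_b[1])
    ix_max = min(box_a[2], box_b[2])
    iy_max = min(box_a[3], box_b[3])

    inter_w = max(0, ix_max - ix_min)
    inter_h = max(0, iy_max - iy_min)
    intersection = inter_w * inter_h

    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - intersection

    return intersection / union if union > 0 else 0.0

ground_truth = (0, 0, 2, 2)  # labelled box from the dataset
predicted = (1, 1, 3, 3)     # box proposed by the model

print(iou(ground_truth, predicted))     # 1 / 7, roughly 0.143
print(iou(ground_truth, ground_truth))  # 1.0, a perfect match
```

A prediction is usually counted as correct only when its IoU with the labelled box is above a chosen threshold, so this single number decides what counts as a hit during evaluation.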

How can I improve my image recognition model?

Improving your model usually comes down to three areas: data quality, model design, and training strategy.

Clean and balance your dataset. Double-check for mislabeled or low-quality images. If one class has way more samples than the others, either balance it out or apply class weighting during training.

Use data augmentation techniques. Slight changes like rotation, flipping, zooming, or brightness adjustment help the model generalise better, especially if you don’t have a huge dataset.

Try transfer learning. Start with a pre-trained model like ResNet or MobileNet and fine-tune it on your dataset. This gives you a solid baseline with far less training time.

Adjust your model architecture. Sometimes a smaller model with good regularisation outperforms a large one prone to overfitting. Don’t just add layers; test variations systematically.

Monitor your metrics, not just accuracy. Look at precision, recall, and confusion matrices, especially if your data is imbalanced. They’ll tell you where the model is going wrong.

Tune your training settings. Experiment with batch size, learning rate, and regularisation. Small adjustments here can make a noticeable difference.
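To show what looking beyond accuracy means in practice, the sketch below computes a confusion matrix plus precision and recall for one class in plain Python. The labels and predictions are invented for the example; with a real model you would feed in the outputs from your validation set.

```python
from collections import Counter

def confusion_counts(y_true, y_pred):
    """Count how often each (actual, predicted) pair occurs."""
    return Counter(zip(y_true, y_pred))

def precision_recall(y_true, y_pred, label):
    """Precision and recall for a single class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == label and p == label)
    fp = sum(1 for t, p in pairs if t != label and p == label)
    fn = sum(1 for t, p in pairs if t == label and p != label)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

# Hypothetical validation results.
y_true = ["dent", "dent", "scratch", "scratch", "scratch", "none"]
y_pred = ["dent", "scratch", "scratch", "scratch", "dent", "none"]

matrix = confusion_counts(y_true, y_pred)
print(matrix[("dent", "scratch")])  # dents mistaken for scratches: 1

print(precision_recall(y_true, y_pred, "scratch"))  # 2/3 and 2/3
print(precision_recall(y_true, y_pred, "dent"))     # (0.5, 0.5)
```

Overall accuracy here is 4 out of 6, but the per-class numbers show that the model specifically confuses dents with scratches, which tells you where to add or relabel training data.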