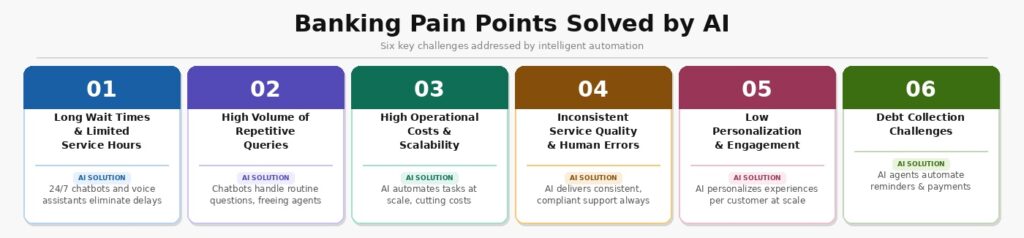

UK banks and insurers are under pressure from every direction. Customers expect answers in seconds, regulators expect a paper trail for every decision, and leadership expects costs to fall. Traditional contact center models, with large headcounts, long queues, and inconsistent handling, cannot satisfy all three demands at once.

That is why AI in customer service has moved from a speculative investment to a practical priority. This playbook explains what AI can realistically do for regulated UK financial services teams, which workflows are worth automating first, where human judgment remains non-negotiable, and how to build the governance foundations that make it all defensible.

What customer service teams need from AI

The requirements for AI in a regulated financial services environment are more specific than those in retail or telecoms. Speed matters, but it cannot come at the cost of accuracy or the audit trail. Cost reduction matters, but not if it leads to mis-selling or raises complaint risk.

What operations teams in UK lending and insurance actually need from AI:

- Faster first response without increasing headcount. Customers contacting a lender or insurer about an existing product expect to be acknowledged and, where possible, answered promptly. AI can absorb routine inbound contacts (balance inquiries, payment confirmations, policy status updates) so that human agents spend their time on interactions that require real judgment.

- Consistent, compliant outputs. AI chatbots should apply the same policy, tone, and regulatory safeguards every time. Human agents inevitably vary; a correctly configured and tested AI system varies far less.

- A clear record of what happened. In a regulated environment, every AI-generated output, whether a suggested response, a call summary, or an automated action, needs to be logged in a way that supports audit and oversight. This is not optional.

- Escalation that works. AI should know its limits. When a query touches vulnerability, hardship, complaint, or a situation that falls outside its training, the system needs to surface it for human review with full context, not drop it.

The mistake many institutions make is treating AI as a replacement for process design. The technology does not solve a poorly structured contact center. It amplifies what is already there, so the starting point has to be a clear picture of what good service looks like and where the current gaps are.

Which workflows to automate first

Not all customer service tasks carry the same risk or the same volume. The best candidates for early AI process automation are those that are high-frequency, well-defined, and low in regulatory sensitivity. Three workflows stand out as consistent early wins.

Knowledge suggestions

When an agent is handling a live contact, the time spent searching internal systems for the right policy wording, eligibility criteria, or process step is dead time for the customer. AI can surface relevant knowledge articles in real time, based on what the customer has said so far, without the agent having to leave the conversation to search manually.

This is particularly valuable in insurance, where products have complex terms and the correct answer often depends on specific policy conditions. An AI assistant that surfaces the right section of a policy document accurately and in context reduces handling time and lowers the risk of the agent giving an incorrect answer from memory.

The key technical requirement is that the knowledge base feeding the AI is well-maintained and version-controlled. If the underlying documentation is outdated or inconsistent, the AI will surface the wrong guidance with the same confidence it would surface the right one.
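To make the version-control point concrete, here is a minimal sketch of a suggestion step that first filters the knowledge base down to the latest approved version of each article and only then ranks articles against what the customer has said. The `KnowledgeArticle` record, the `approved` flag, and the word-overlap scoring are illustrative assumptions; a real deployment would more likely use a search index or an embedding model, but the filtering principle is the same.

```python
from __future__ import annotations

import re
from dataclasses import dataclass


@dataclass(frozen=True)
class KnowledgeArticle:
    """Hypothetical versioned knowledge-base entry."""
    article_id: str
    version: int
    title: str
    body: str
    approved: bool  # only reviewed, approved versions may be surfaced to agents


def tokenize(text: str) -> set[str]:
    """Lower-case word tokens; a stand-in for a real search or embedding model."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def latest_approved(articles: list[KnowledgeArticle]) -> dict[str, KnowledgeArticle]:
    """Keep only the highest approved version of each article."""
    latest: dict[str, KnowledgeArticle] = {}
    for art in articles:
        if not art.approved:
            continue  # drafts and unreviewed edits are never suggested
        current = latest.get(art.article_id)
        if current is None or art.version > current.version:
            latest[art.article_id] = art
    return latest


def suggest_articles(
    transcript_so_far: str, articles: list[KnowledgeArticle], top_k: int = 3
) -> list[tuple[str, int, int]]:
    """Rank approved articles by word overlap with the conversation so far.

    Returns (article_id, version, score) so each suggestion can be logged.
    """
    query = tokenize(transcript_so_far)
    scored = []
    for art in latest_approved(articles).values():
        score = len(query & tokenize(f"{art.title} {art.body}"))
        if score > 0:
            scored.append((art.article_id, art.version, score))
    scored.sort(key=lambda item: item[2], reverse=True)
    return scored[:top_k]
```

Returning the article ID and version with each suggestion means the exact guidance shown to the agent can be recorded and reconstructed later, which ties knowledge suggestions back into the audit trail.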

Call summaries

Post-call work is one of the most significant drains on contact center capacity. An agent who spends eight minutes on a call can easily spend another three to five minutes writing notes, categorizing the interaction, and updating the CRM. Multiply that across hundreds of agents and thousands of daily calls, and the productivity cost is material.

AI transcription and summarisation tools can generate a structured call summary in seconds, covering the reason for contact, actions taken, and any follow-up required. The agent reviews and confirms it rather than writing from scratch. In practice, this can cut the post-call portion of average handling time by 20–30%, without changing what happens during the call itself.

For UK lenders and insurers, these summaries also serve a compliance function. A consistent, structured record of every customer interaction, captured automatically, is a stronger audit trail than agent-written notes that vary in detail and format.
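A minimal sketch of what a structured, reviewable summary record could look like, assuming the summarisation model has already produced a draft. The `CallSummary` fields, the `confirm_summary` helper, and the 0.9 similarity threshold used to decide whether an agent's edit counts as "significant" are all hypothetical. The design point is that the AI draft and the agent-confirmed text are both kept, so the record shows what the model proposed and what the agent approved.

```python
from __future__ import annotations

import difflib
from dataclasses import dataclass, replace
from datetime import datetime, timezone


@dataclass(frozen=True)
class CallSummary:
    """Hypothetical structured summary drafted by a summarisation model."""
    call_id: str
    reason_for_contact: str
    actions_taken: tuple[str, ...]
    follow_up_required: tuple[str, ...]
    draft_text: str                  # model output, retained unchanged for audit
    final_text: str | None = None    # text the agent confirmed or edited
    confirmed_by: str | None = None
    confirmed_at: str | None = None


def confirm_summary(summary: CallSummary, agent_id: str, edited_text: str | None = None) -> CallSummary:
    """Record the agent's review without overwriting the AI draft."""
    return replace(
        summary,
        final_text=edited_text if edited_text is not None else summary.draft_text,
        confirmed_by=agent_id,
        confirmed_at=datetime.now(timezone.utc).isoformat(),
    )


def significantly_edited(summary: CallSummary, threshold: float = 0.9) -> bool:
    """Flag a summary whose final text differs materially from the draft (assumed threshold)."""
    if summary.final_text is None:
        raise ValueError("summary has not been confirmed by an agent")
    similarity = difflib.SequenceMatcher(None, summary.draft_text, summary.final_text).ratio()
    return similarity < threshold
```

Counting how often `significantly_edited` returns `False` is one way to track a summary accuracy rate over time.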

After-call actions

Some contact types generate predictable follow-up tasks: send a policy document, update an address, issue a letter of confirmation, flag an account for callback. These are steps that an agent currently completes manually, often after the call has ended, sometimes with a delay.

AI can identify these action triggers in the call transcript and automatically initiate the relevant system updates or communications, subject to human review thresholds set by the business. The agent confirms or overrides rather than executing each step individually. This reduces the gap between a customer being told something will happen and it actually happening, which matters for both customer experience and complaint risk.

The governance requirement here is clear: every automated action needs a log entry, and there must be a defined process for reviewing exceptions. Automation that operates invisibly is a regulatory liability.
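The review-threshold idea can be sketched as a small routing function. It assumes an upstream model has already detected a candidate action in the transcript and attached a confidence score; the action names, the values in `REVIEW_THRESHOLDS`, and the three routes are illustrative, not a recommended configuration. Every decision is written to the audit log, including those that are not executed, and unknown action types go to an exception queue rather than being acted on silently.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

# Hypothetical per-action thresholds set by the business. A value above 1.0
# means the action always needs agent confirmation, whatever the confidence.
REVIEW_THRESHOLDS = {
    "send_policy_document": 0.80,
    "issue_confirmation_letter": 0.85,
    "flag_for_callback": 0.70,
    "update_address": 1.01,
}


@dataclass
class ActionDecision:
    call_id: str
    action: str
    confidence: float
    route: str  # "auto_execute", "agent_review" or "exception_queue"
    decided_at: str


def route_action(call_id: str, action: str, confidence: float, audit_log: list[str]) -> ActionDecision:
    """Decide how a detected after-call action is handled and log the decision."""
    threshold = REVIEW_THRESHOLDS.get(action)
    if threshold is None:
        route = "exception_queue"  # unrecognised action type: never act silently
    elif confidence >= threshold:
        route = "auto_execute"     # still visible to the agent to confirm or override
    else:
        route = "agent_review"
    decision = ActionDecision(call_id, action, confidence, route, datetime.now(timezone.utc).isoformat())
    audit_log.append(json.dumps(asdict(decision)))  # one log entry per decision
    return decision
```

In practice the audit log would be an append-only store rather than a Python list; the list simply keeps the sketch self-contained.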

Which workflows should stay human-led

AI in customer service works best when it augments human agents, not when it replaces human judgment in situations that require it. Several categories of interaction should remain human-led, with AI providing support at most.

Financial difficulty and vulnerability

When a customer is struggling to make payments, is in financial distress, or shows signs of vulnerability, the interaction requires empathy, flexibility, and real-time judgment. AI can flag potential vulnerability signals from language patterns, which is useful, but the conversation itself should be handled by a trained agent. The FCA's Consumer Duty and its expectations regarding the fair treatment of customers in vulnerable circumstances make this a compliance issue, not just a service-quality preference.

Formal complaints

A complaint under the FCA's DISP rules requires a defined handling process, a fair and impartial investigation, and a written response within set timeframes. AI can assist with drafting and with identifying relevant precedent, but the determination itself (whether to uphold the complaint, and on what basis) must be a human decision with a documented rationale.

Complex product decisions

Customers deciding whether to take out a new mortgage, increase their sum assured, or restructure a loan are making important financial decisions. The advice and information given in these conversations carry regulatory weight. AI-generated information can support the agent, but the agent remains responsible for what the customer is told.

Escalated or high-emotion contacts

When a customer is angry, distressed, or making threats, including threats of harm, the contact requires human presence. AI should detect these signals and route accordingly, but it should not attempt to manage them autonomously.
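A deliberately simple sketch of signal-based routing: detected signals decide which human queue takes the contact, and the signals are passed along as context so nothing is dropped. The phrase lists and queue names are hypothetical placeholders. Keyword matching is used only to keep the example self-contained; it is far too crude for real vulnerability detection, which would need a tested classifier and the firm's own vulnerability framework.

```python
from __future__ import annotations

# Illustrative phrase lists only, not a validated vulnerability model.
SIGNALS = {
    "harm_risk": ["hurt myself", "harm myself", "end it all"],
    "vulnerability": ["lost my job", "bereavement", "can't afford", "cannot afford", "diagnosed"],
    "complaint": ["complaint", "complain", "ombudsman", "unacceptable"],
    "high_emotion": ["furious", "disgusted", "fed up"],
}

# Priority order: the most serious signal found decides the queue.
ROUTES = [
    ("harm_risk", "urgent_human_specialist"),
    ("vulnerability", "trained_vulnerability_team"),
    ("complaint", "complaints_team"),
    ("high_emotion", "senior_agent"),
]


def detect_signals(utterance: str) -> set[str]:
    """Return the names of all signal categories present in the utterance."""
    text = utterance.lower()
    return {name for name, phrases in SIGNALS.items() if any(p in text for p in phrases)}


def route_contact(utterance: str) -> tuple[str, set[str]]:
    """Pick a queue and return the detected signals as hand-over context."""
    found = detect_signals(utterance)
    for signal, queue in ROUTES:
        if signal in found:
            return queue, found  # a human takes over, with context attached
    return "ai_assisted_queue", found
```

Ordering the routes by seriousness means a contact that shows both a complaint and a vulnerability signal reaches the team trained for vulnerability first, while the complaint signal still travels with it.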

The practical implication is that AI investment in regulated financial services needs to be accompanied by investment in human capability, not as an alternative to it. The agents handling the interactions that AI cannot manage well need to be better trained and better supported, not fewer in number.

Metrics that matter in regulated support teams

Standard contact center KPIs (average handling time, first contact resolution, CSAT) remain relevant, but on their own they are not sufficient for AI deployments in regulated environments. The following metrics provide a more complete picture.

| Metric | What it measures | Why it matters in regulation |
|---|---|---|
| AI containment rate | % of contacts resolved by AI without human involvement | Indicates automation coverage; high rates need quality validation |
| Escalation accuracy | % of contacts needing human review that the AI correctly identified and escalated | Failures here create complaints and vulnerability risk |
| Summary accuracy rate | % of AI-generated call summaries confirmed without significant agent edits | Proxy for output quality and audit trail reliability |
| Post-call action error rate | % of automated after-call actions reversed or corrected by agents | Indicates system reliability for process automation |
| Average handling time (AI-assisted vs. baseline) | Time per contact with and without AI support | Demonstrates efficiency gain; supports business case |
| Complaint rate on AI-handled contacts | % of contacts where AI played a role that result in a complaint | Critical for FCA Consumer Duty compliance monitoring |
| Vulnerable customer identification rate | % of contacts flagged for vulnerability signals by AI | Supports outcomes-based evidence for supervisory review |

The complaint rate on AI-handled contacts deserves particular attention. A system that is fast but generates a higher proportion of complaints is not fit for purpose. This metric needs to be tracked from deployment, not retrospectively.
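Several of these metrics can be computed directly from contact-level logs. The sketch below assumes a hypothetical `ContactRecord` per contact, with a `needed_human` field established by QA sampling or later outcomes. It reads escalation accuracy as the share of AI-involved contacts needing human review that the AI actually escalated, which is one reasonable interpretation rather than a standard definition. Comparing complaint rates for AI-involved and other contacts is one way to check whether AI is generating a higher proportion of complaints.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ContactRecord:
    """Hypothetical per-contact record assembled from system logs and QA review."""
    ai_involved: bool           # AI handled or assisted the contact
    resolved_by_ai_alone: bool
    needed_human: bool          # established by QA sampling or later outcome
    escalated_by_ai: bool
    automated_actions: int
    reversed_actions: int
    complaint_raised: bool


def rate(numerator: int, denominator: int) -> float | None:
    """Ratio, or None when there is nothing to measure yet."""
    return numerator / denominator if denominator else None


def ai_service_metrics(records: list[ContactRecord]) -> dict[str, float | None]:
    ai = [r for r in records if r.ai_involved]
    non_ai = [r for r in records if not r.ai_involved]
    needing_human = [r for r in ai if r.needed_human]
    return {
        "containment_rate": rate(sum(r.resolved_by_ai_alone for r in records), len(records)),
        "escalation_accuracy": rate(sum(r.escalated_by_ai for r in needing_human), len(needing_human)),
        "post_call_action_error_rate": rate(
            sum(r.reversed_actions for r in records), sum(r.automated_actions for r in records)
        ),
        "complaint_rate_ai": rate(sum(r.complaint_raised for r in ai), len(ai)),
        "complaint_rate_non_ai": rate(sum(r.complaint_raised for r in non_ai), len(non_ai)),
    }
```

Returning `None` for an empty denominator avoids reporting a misleading 0% in the first days after deployment, when some categories have no contacts yet.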


What data and governance must be in place?

AI in regulated financial services is only as safe as the framework around it. Before deploying any AI-assisted customer service capability, UK lenders and insurers need to address four foundational requirements.

Data quality and accessibility

AI systems require clean, up-to-date, and well-structured data. Customer records that are inconsistent across systems, knowledge bases that have not been updated, and call data that is not properly transcribed will all produce unreliable AI outputs. A data audit should precede any AI deployment, and a maintenance regimen for ongoing data quality should be in place before go-live.
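As an illustration of one check a pre-deployment data audit could include, this sketch compares customer records held in two systems and reports missing records and field mismatches. The system names, the field list, and the normalisation rule (ignoring case and extra whitespace only) are assumptions for the example.

```python
from __future__ import annotations


def normalise(value: str | None) -> str:
    """Ignore case and extra whitespace; a real audit may need richer rules."""
    return " ".join((value or "").split()).lower()


def audit_records(
    crm: dict[str, dict[str, str]],
    core: dict[str, dict[str, str]],
    fields: tuple[str, ...] = ("name", "postcode", "email"),
) -> list[str]:
    """List customers missing from either system or with conflicting fields."""
    issues = []
    for cid in sorted(crm.keys() | core.keys()):
        if cid not in crm or cid not in core:
            missing_from = "CRM" if cid not in crm else "core system"
            issues.append(f"{cid}: missing from {missing_from}")
            continue
        for field in fields:
            if normalise(crm[cid].get(field)) != normalise(core[cid].get(field)):
                issues.append(f"{cid}: '{field}' differs between CRM and core system")
    return issues
```

Running a check like this before go-live, and then on a schedule, gives the ongoing maintenance regimen something concrete to measure.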

Model explainability

Regulators, including the FCA and the PRA, expect firms to understand how their systems make decisions, particularly when those decisions affect customers. Black-box models are difficult to defend in a supervisory review. Wherever AI influences outcomes, even in an assistive capacity, the firm should be able to explain its logic and demonstrate that it produces fair results.

Human oversight and override

Every AI capability deployed in a customer-facing context should have a defined human review point and a clear override mechanism. This is both a governance requirement and a practical safeguard. AI systems will make errors; the question is whether the surrounding process catches them before they reach the customer or affect the customer's account.

Bias and fairness monitoring

AI trained on historical data can replicate historical biases. In lending and insurance, where protected characteristics intersect with eligibility and pricing decisions, this is a material risk. Firms need to monitor AI outputs across customer segments regularly and maintain a process to identify and correct disparate impacts.
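One simple way to monitor for disparate impact is to compare the rate of favourable outcomes in each customer segment with the best-performing segment. In the sketch below, what counts as a favourable outcome, the segment labels, and the 0.8 ratio threshold are assumptions; the 0.8 figure echoes the US "four-fifths" rule of thumb and is not a UK regulatory test.

```python
from __future__ import annotations

from collections import defaultdict


def outcome_rates(outcomes: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of favourable outcomes per customer segment."""
    totals: dict[str, int] = defaultdict(int)
    favourable: dict[str, int] = defaultdict(int)
    for segment, is_favourable in outcomes:
        totals[segment] += 1
        favourable[segment] += int(is_favourable)
    return {seg: favourable[seg] / totals[seg] for seg in totals}


def flag_disparities(outcomes: list[tuple[str, bool]], min_ratio: float = 0.8) -> dict[str, float]:
    """Segments whose rate falls below min_ratio times the best segment's rate."""
    rates = outcome_rates(outcomes)
    if not rates:
        return {}
    best = max(rates.values())
    if best == 0:
        return {}  # no favourable outcomes anywhere: nothing to compare against
    return {seg: rate / best for seg, rate in rates.items() if rate / best < min_ratio}
```

Small segments produce noisy rates, so a flag from a check like this is best treated as a prompt for investigation, ideally backed by a significance test, rather than as a conclusion.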

The ICO's guidance on AI and data protection, the PRA's model risk management principles, and the Consumer Duty's requirement to demonstrate good outcomes all apply here. Governance is not a box to tick before deployment; it is an ongoing operational discipline.

How Altamira supports customer service AI delivery

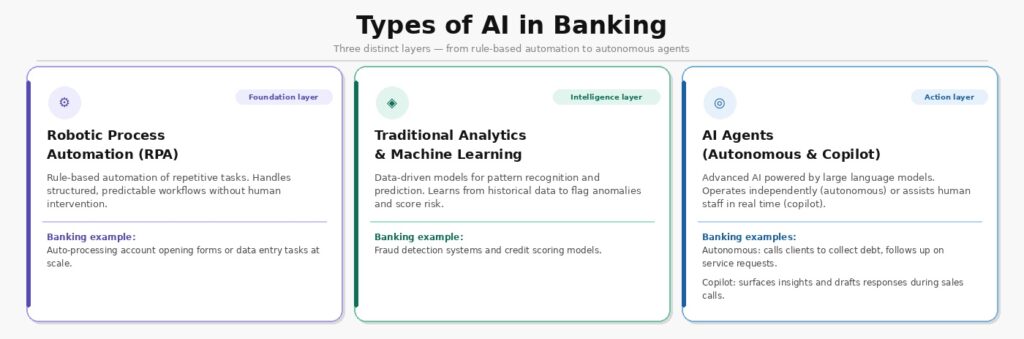

Delivering AI in a regulated financial services environment is a technical and organizational challenge. The technology itself, such as large language models, is only part of the answer. Altamira works with banks, lenders, and insurers to design and build AI-assisted customer service capabilities that are operationally sound and defensible to regulators from the outset.

That means starting with a clear picture of the current contact mix: what types of interactions are coming in, how they are currently handled, and where the highest-value automation opportunities lie. It means building AI systems that are integrated into existing contact center infrastructure rather than bolted on alongside it. And it means establishing the governance layer (logging, monitoring, escalation design, model documentation) as a core part of the delivery, not an afterthought.

Altamira's teams include specialists in both AI engineering and financial services operations. That combination matters. An AI system that is technically correct but fails to account for DISP complaint-handling requirements, Consumer Duty expectations, or the operational realities of a regulated contact center will create more problems than it solves.

Whether the starting point is a specific workflow (call summarisation, knowledge assistance, after-call automation) or a broader transformation of the contact center model, Altamira provides the technical capability, the regulatory understanding, and the delivery experience to make it work.

The final word

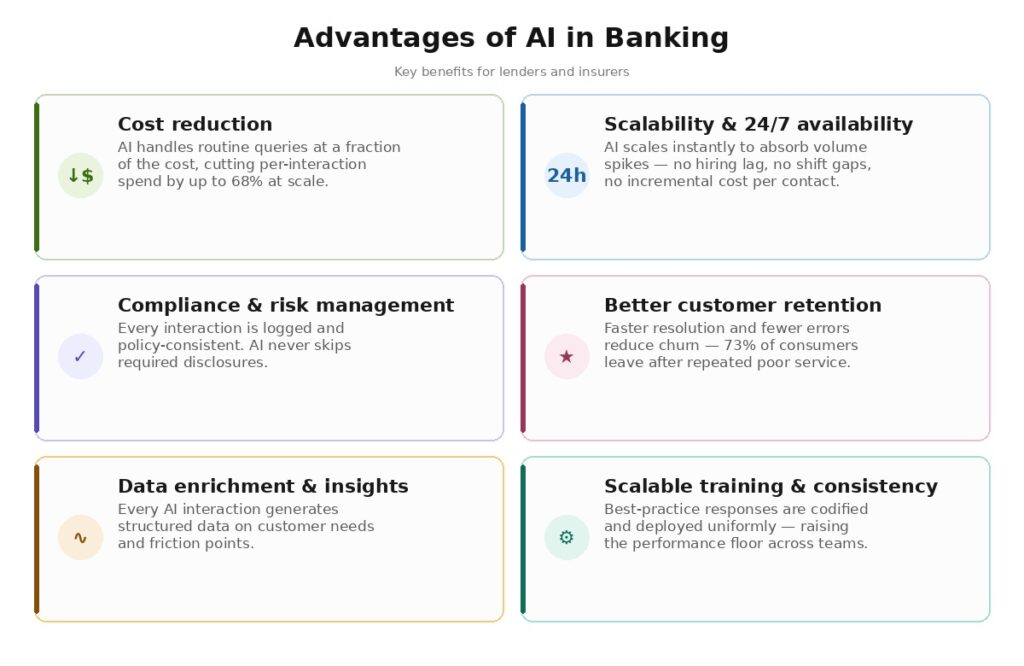

AI in banking and insurance customer service is no longer an experimental proposition. Banks that have deployed AI-assisted contact center tools report lower per-interaction costs, less post-call work, and customer satisfaction scores that have held steady or improved. The technology works.

What distinguishes successful deployments in regulated environments is not the AI itself but the rigor around it. Clear workflow selection, strong data foundations, defined governance, and a genuine commitment to keeping human judgment at the center of high-stakes interactions are what separate institutions that benefit from AI from those that accumulate risk.

For UK lenders and insurers, the practical path forward is clear in principle: start with high-volume, low-risk workflows, instrument them properly from day one, and build outward from there as confidence and capability grow. The institutions that approach this methodically will find that AI makes their contact centers faster, more consistent, and easier to supervise: an asset rather than a liability.


FAQ

How is AI transforming wealth management?

AI is changing wealth management in two broad ways: how advice is delivered and how portfolios are managed.

On the advice side, AI enables firms to personalize recommendations at a scale that was previously impractical. A relationship manager covering hundreds of clients cannot monitor every account daily, but an AI system can, flagging life events, drift from agreed risk profiles, or opportunities based on real-time market conditions and individual client data. This shifts the adviser's role from information gathering to higher-value conversation.

On the portfolio side, AI models are being used for more sophisticated risk analysis, factor exposure monitoring, and scenario modeling. Some firms are also using AI to automate rebalancing within defined parameters, reducing both cost and the lag between a portfolio going off-strategy and corrective action being taken.
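To illustrate what "rebalancing within defined parameters" can mean in practice, here is a minimal sketch of a drift check with a tolerance band and an approval limit. The 5-percentage-point band, the 10,000 trade limit, and the asset-class names are illustrative assumptions, and a real implementation would also account for transaction costs, tax, and cash flows. Orders above the limit are flagged for adviser approval rather than executed automatically.

```python
from __future__ import annotations


def portfolio_drift(holdings: dict[str, float], targets: dict[str, float]) -> dict[str, float]:
    """Current weight minus target weight for each asset class in the mandate.

    Assumes holdings contain only the asset classes listed in targets.
    """
    total = sum(holdings.values())
    return {asset: holdings.get(asset, 0.0) / total - target for asset, target in targets.items()}


def rebalance_orders(
    holdings: dict[str, float],
    targets: dict[str, float],
    band: float = 0.05,               # assumed tolerance: 5 percentage points
    max_auto_trade: float = 10_000.0,  # assumed limit for unattended trades
) -> tuple[dict[str, float], bool]:
    """Return (orders, needs_adviser_approval). Positive = buy, negative = sell."""
    if abs(sum(targets.values()) - 1.0) > 1e-9:
        raise ValueError("target weights must sum to 1")
    drift = portfolio_drift(holdings, targets)
    if all(abs(d) <= band for d in drift.values()):
        return {}, False  # within tolerance: no action needed
    total = sum(holdings.values())
    orders = {asset: round(-d * total, 2) for asset, d in drift.items()}
    orders = {asset: amount for asset, amount in orders.items() if amount != 0}
    needs_approval = any(abs(amount) > max_auto_trade for amount in orders.values())
    return orders, needs_approval
```

The tolerance band stops the system trading on small fluctuations, while the approval flag keeps larger moves inside the human oversight framework.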

In regulated markets like the UK, these capabilities sit within a human oversight framework: the AI informs and executes within set boundaries, but the adviser or investment committee remains accountable for the outcome. The practical result is that firms can serve a broader client base profitably, deliver more consistent service quality across all segments, and free senior advisers to focus on the work that genuinely requires their expertise.

Where can you find AI solutions for wealth management?

AI solutions for wealth management fall into a few broad categories, and the right starting point depends on what the firm is trying to solve.

Specialist fintech vendors build tools designed specifically for financial services, covering portfolio analytics, client engagement, document processing, and regulatory reporting. These typically integrate with existing custody, CRM, and planning platforms and come with financial services compliance considerations already built in.

Larger technology providers, including cloud platforms and enterprise software vendors, offer AI infrastructure and tooling that wealth managers can configure for their own use cases. This approach offers more flexibility but requires more internal capability to implement and govern.

What is an AI personalization platform in banking?

An AI personalization platform in banking is a system that uses customer data (transaction history, product holdings, channel behavior, and life stage signals) to tailor the interactions, communications, and recommendations each customer receives. Rather than treating everyone in a segment identically, the platform identifies what is relevant to each individual and delivers it at the right moment through the right channel.

In practice, this might mean a mortgage customer receiving proactive information about their rate review before they need to call, a current account holder being offered a savings product when their balance suggests capacity to save, or a claims customer receiving status updates that match their communication preferences without having to follow up.

For UK lenders and insurers, the FCA's Consumer Duty adds further weight to this: firms are expected to demonstrate that communications and products are appropriate for the customer receiving them. A properly governed AI personalization platform can both enable that and provide the evidence to demonstrate it.