The human brain has a remarkable ability to immediately identify and differentiate items within a visual scene. Take, for example, the ease with which we can tell a photograph of a bear from one of a bicycle in the blink of an eye.

As machines begin to replicate this capability, they move ever closer to what we consider true artificial intelligence.

AI models for image recognition are built on computer vision, a field that aims to emulate human visual processing and one where we've seen considerable breakthroughs that push the envelope.

Today's machines can recognise diverse images, pinpoint objects and facial features, and even generate pictures of people who've never existed.

It's hard to believe, right? In this regard, image recognition technology opens the door to more complex discoveries.

Let's explore the list of AI image recognition models and other ML algorithms, highlighting their capabilities and the various applications they are used for.

It's hard to disagree that people are flooding apps, social media, and websites with a deluge of image data.

For example, over 50 billion images have been uploaded to Instagram since its launch. This explosion of digital content provides a treasure trove for all industries looking to improve and innovate their services.

Image recognition technology enables computers to pinpoint objects, individuals, landmarks, and other elements within pictures. This niche within computer vision specialises in detecting patterns and consistencies across visual data, interpreting pixel configurations in images to categorise them accordingly. Prof. Fei-Fei Li, a computer vision expert from World Labs, has shared her knowledge on how image recognition works.

https://youtu.be/40riCqvRoMs?si=HzwpThc2tfA30lwf

Because this data is highly complex, it is translated into numerical and symbolic forms that ultimately inform decision-making processes. Every AI image recognition model is trained until it converges, so its accuracy needs to be checked carefully throughout training.

How convolutional neural networks (CNNs) in image recognition work

CNNs are deep neural networks that process structured array data such as images. CNNs are designed to adaptively learn spatial hierarchies of features from input images.

During training, a CNN learns the values of the filters and weights through a backpropagation algorithm, adjusting them to recognise patterns and features in images, such as edges, textures, or object parts, which then contribute to recognising the whole object within the image.

By stacking multiple convolutional, activation, and pooling layers, CNNs can learn a hierarchy of increasingly complex features.

Lower layers might learn to detect colours and edges, intermediate layers could learn to detect more complex structures like eyes or wheels, and deeper layers can detect high-level features like faces or entire objects, which is critical for image recognition tasks.
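To make the convolution, activation, and pooling stack more concrete, here is a minimal sketch in plain Python. It is an illustration only: the toy image and the hand-picked `VERTICAL_EDGE` filter are assumptions made for this example, whereas a real CNN learns its filter values through backpropagation and would normally be built with a deep learning framework.

```python
def conv2d(image, kernel):
    """Valid (no padding) 2D cross-correlation, the operation CNN layers apply."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def relu(feature_map):
    """Activation: negative responses are set to zero."""
    return [[max(0, v) for v in row] for row in feature_map]


def max_pool(feature_map, size=2):
    """Non-overlapping max pooling; rows/columns that do not fill a window are dropped."""
    out_h = len(feature_map) // size
    out_w = len(feature_map[0]) // size
    return [
        [
            max(feature_map[r * size + i][c * size + j]
                for i in range(size) for j in range(size))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Toy 6x6 image (assumption): dark left half (0), bright right half (1).
IMAGE = [[0, 0, 0, 1, 1, 1] for _ in range(6)]

# Hand-picked filter (assumption) that responds to a dark-to-bright vertical edge.
VERTICAL_EDGE = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

features = conv2d(IMAGE, VERTICAL_EDGE)  # 4x4 map, strongest where the edge sits
activated = relu(features)
pooled = max_pool(activated)             # 2x2 summary of the strongest responses
```

The feature map is strongest in the columns where the dark region meets the bright one, and pooling keeps that response while shrinking the map. Stacking several such stages is what lets deeper layers respond to larger, more complex structures.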

Overview of popular image recognition algorithms

A few image recognition algorithms are a cut above the rest. All of them are deep learning algorithms, but they differ in how they approach recognising different classes of objects.

- Faster R-CNN (Faster Region-based Convolutional Neural Network) is the star of the R-CNN family and is widely regarded as one of the strongest models for object detection tasks. It uses a Region Proposal Network (RPN) to propose candidate object regions, which a Fast R-CNN detector then classifies and refines, a significant improvement over previous image recognition models. Faster R-CNN can process an image in around 200 ms, compared with about 2 seconds for Fast R-CNN. Processing time depends heavily on the hardware and data complexity.

- Single Shot Detector (SSD) lays a grid of default bounding boxes with different aspect ratios over the image. It then combines the feature maps produced at different scales to handle objects of differing sizes. This approach makes SSDs very flexible and easy to train. With this AI model, an image can be processed within 125 ms, depending on the hardware used and the data complexity.

- You Only Look Once (YOLO) processes a frame only once, dividing it into a grid of fixed size and determining whether each grid cell contains an object. To this end, the object detection algorithm uses a confidence score and multiple bounding boxes within each grid cell. The third version of the model, YOLOv3, is among the most popular. A lightweight version called Tiny YOLO can process an image in about 4 ms (again, depending on the hardware and the data complexity).
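The grid-and-confidence idea behind YOLO can be sketched in a few lines of Python. This is a simplified illustration, not the actual YOLO implementation: the 448x448 image size, the 7x7 grid, the 0.5 threshold, and the sample predictions are all assumptions chosen for the example.

```python
def grid_cell(cx, cy, image_w, image_h, grid_size):
    """Return (row, col) of the grid cell containing the point (cx, cy)."""
    col = min(int(cx / image_w * grid_size), grid_size - 1)
    row = min(int(cy / image_h * grid_size), grid_size - 1)
    return row, col


def keep_confident(predictions, threshold=0.5):
    """Keep only the predicted boxes whose confidence meets the threshold."""
    return [p for p in predictions if p["confidence"] >= threshold]


# Hypothetical raw predictions: boxes are (x, y, width, height) in pixels.
PREDICTIONS = [
    {"cell": (1, 3), "box": (150, 60, 100, 80), "confidence": 0.91},
    {"cell": (1, 3), "box": (140, 50, 120, 95), "confidence": 0.42},
    {"cell": (5, 0), "box": (10, 330, 40, 40), "confidence": 0.08},
]

# An object centred at (200, 100) in a 448x448 image with a 7x7 grid.
cell = grid_cell(200, 100, 448, 448, 7)
confident = keep_confident(PREDICTIONS)
```

Each grid cell is responsible for objects whose centre falls inside it, and low-confidence boxes are discarded before any further processing. Production detectors add more steps on top of this, such as merging overlapping boxes.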

Best image recognition models

Image recognition models use deep learning algorithms to interpret and classify visual data with precision, transforming how machines understand and interact with the visual world around us.

Let's look at the most popular image recognition and image classification models.

Bag of Features model (BoF) overview

The BoF model is a technique used in computer vision to recognise and locate objects within images. It compares a reference image, typically a cropped sample of the object you want to detect, to a target image where the object might be present.

Instead of comparing images pixel by pixel, BoF first breaks both images into a collection of local features. These are distinctive patterns or textures: corners, edges, or blobs, extracted using algorithms such as SIFT or SURF.

Each feature is then encoded as a numeric descriptor that captures its shape and appearance.

Then, BoF treats them similarly to words in a document. It clusters features into groups (known as visual words) and represents the image as a histogram of these visual words, hence the name "Bag of Features," echoing the "Bag of Words" model from text analysis.

To identify whether the object from the sample image exists in the target image, BoF compares their feature histograms and checks for spatial consistency.

If enough visual words align and form a similar spatial pattern, the model concludes that the object has been found.

BoF is particularly useful in scenarios where the object’s appearance might vary slightly due to rotation, scale, or lighting, as it focuses on the occurrence and distribution of local patterns rather than on global pixel similarity.
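The histogram step described above can be sketched as follows. This is a toy example under stated assumptions: the 2-D descriptors and the three-word `VOCABULARY` are made up for illustration (real SIFT descriptors have 128 dimensions, and the vocabulary is usually obtained by clustering many descriptors), histogram intersection is just one possible similarity measure, and the spatial consistency check is left out.

```python
import math


def nearest_word(descriptor, vocabulary):
    """Index of the visual word (cluster centre) closest to a descriptor."""
    distances = [math.dist(descriptor, word) for word in vocabulary]
    return distances.index(min(distances))


def bof_histogram(descriptors, vocabulary):
    """Normalised histogram of visual-word occurrences for one image."""
    counts = [0] * len(vocabulary)
    for descriptor in descriptors:
        counts[nearest_word(descriptor, vocabulary)] += 1
    total = sum(counts)
    return [c / total for c in counts] if total else counts


def histogram_intersection(h1, h2):
    """Similarity in [0, 1] for normalised histograms; 1 means identical."""
    return sum(min(a, b) for a, b in zip(h1, h2))


# Hypothetical vocabulary of three visual words.
VOCABULARY = [(0, 0), (10, 0), (0, 10)]

# Hypothetical local descriptors extracted from three images.
REFERENCE = [(1, 1), (9, 1), (0.5, 9), (1, 0)]
SIMILAR_TARGET = [(0, 1), (10, 1), (1, 10), (1, 1)]
DIFFERENT_TARGET = [(9, 0), (10, 1), (11, 0), (9, 1)]

ref_hist = bof_histogram(REFERENCE, VOCABULARY)
score_similar = histogram_intersection(
    ref_hist, bof_histogram(SIMILAR_TARGET, VOCABULARY))
score_different = histogram_intersection(
    ref_hist, bof_histogram(DIFFERENT_TARGET, VOCABULARY))
```

Because only the counts of visual words are compared, the two images with the same mix of local patterns score highly even though their individual descriptors differ slightly.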

Viola-Jones algorithm overview

Viola-Jones was one of the first algorithms capable of detecting faces in real time, and it remains a foundational method in face detection.

It works by rapidly scanning an image to identify regions that likely contain a face, using a combination of efficient feature extraction and machine learning-based classification.

At its core, the algorithm extracts Haar-like features: simple rectangular patterns that capture the image's edge and texture information. These features are computed very quickly using an integral image representation.

Viola-Jones uses a machine learning technique called AdaBoost to determine whether a region contains a face. This method combines many simple “weak” classifiers, each based on a single Haar feature, into a stronger, more accurate ensemble.

Boosted classifiers are trained to distinguish between face and non-face patterns.

The detection process is structured as a cascade of classifiers, where each stage quickly rejects regions that clearly don’t contain faces. Only areas that pass through all cascade stages are considered potential face detections.

This structure allows the algorithm to process images rapidly, focusing computational effort only where it's likely to matter.
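Here is a rough sketch of the integral image trick, a two-rectangle Haar-like feature, and the early-rejection idea behind the cascade. It is deliberately simplified: the toy images and thresholds are assumptions, and each cascade stage here is a single thresholded feature, whereas real stages are boosted ensembles of many weak classifiers.

```python
def integral_image(image):
    """ii[r][c] holds the sum of image[0..r-1][0..c-1] (zero-padded top and left)."""
    h, w = len(image), len(image[0])
    ii = [[0] * (w + 1) for _ in range(h + 1)]
    for r in range(h):
        row_sum = 0
        for c in range(w):
            row_sum += image[r][c]
            ii[r + 1][c + 1] = ii[r][c + 1] + row_sum
    return ii


def rect_sum(ii, top, left, height, width):
    """Sum of the pixels in a rectangle using only four lookups."""
    bottom, right = top + height, left + width
    return ii[bottom][right] - ii[top][right] - ii[bottom][left] + ii[top][left]


def haar_two_rect(ii, top, left, height, width):
    """Two-rectangle Haar-like feature: right half minus left half."""
    half = width // 2
    left_sum = rect_sum(ii, top, left, height, half)
    right_sum = rect_sum(ii, top, left + half, height, half)
    return right_sum - left_sum


def cascade_accepts(ii, stages):
    """Reject the window as soon as one stage's feature falls below its threshold."""
    for top, left, height, width, threshold in stages:
        if haar_two_rect(ii, top, left, height, width) < threshold:
            return False
    return True


# Toy 4x4 windows (assumptions): one with a dark-to-bright edge, one flat.
EDGE_WINDOW = [[1, 1, 9, 9] for _ in range(4)]
FLAT_WINDOW = [[5, 5, 5, 5] for _ in range(4)]

# Hypothetical stages: (top, left, height, width, threshold).
STAGES = [(0, 0, 4, 4, 10), (0, 0, 2, 4, 10)]

edge_ii = integral_image(EDGE_WINDOW)
flat_ii = integral_image(FLAT_WINDOW)
edge_feature = haar_two_rect(edge_ii, 0, 0, 4, 4)
```

Once the integral image is built, any rectangle sum costs four lookups regardless of its size, which is why thousands of features can be evaluated per window. The flat window fails the first stage and is discarded immediately, so no further computation is spent on it.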

Transfer learning

Transfer learning is a machine learning method where a model developed for a particular task is reused as the starting point for a model on a second task.

It is an effective technique, especially when the first task involves a large and complex dataset and the second task does not have as much data available. Here's how it works and why it is beneficial for image recognition.

Pre-trained models

In transfer learning, a model is initially trained on a large dataset with a wide range of images, like ImageNet, which has millions of images classified into thousands of categories.

This initial model learns many features from the comprehensive dataset, making it a robust feature extractor for image data.

Feature transfer

When the pre-trained model is used for a new task, it can transfer the learned features (weights and biases) to the new task.

Since the initial layers of CNNs often learn to recognise basic shapes and textures, while the later layers learn more specific details, the features in the initial layers are generally useful for many image recognition tasks.

Fine-tuning

The final layers of the model can be fine-tuned with a smaller dataset for a specific task.

Fine-tuning involves retraining these layers to adjust the weights to better suit the specific task at hand, while the earlier layers may remain frozen on the weights learned from the original dataset.

In essence, transfer learning leverages the knowledge gained from a previous task to boost learning in a new but related task. This is particularly useful in image recognition, where collecting and labelling a large dataset can be very resource-intensive.

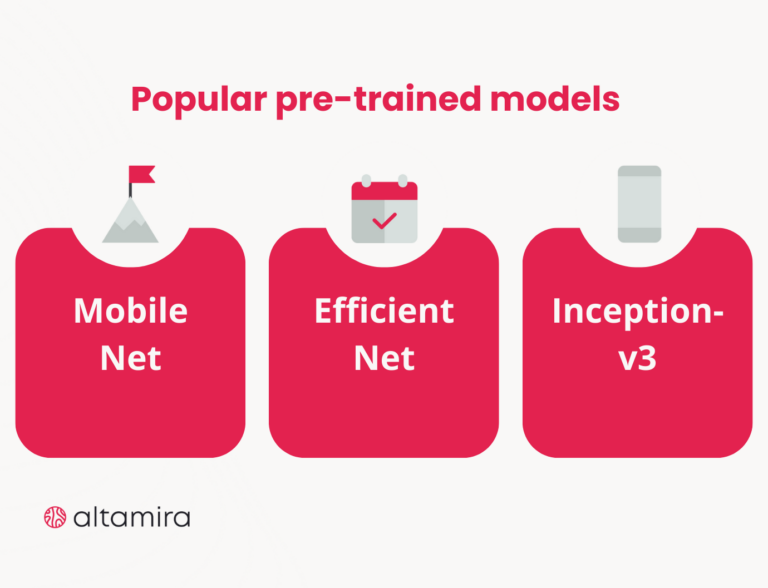
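The split between frozen layers and a fine-tuned final layer can be illustrated with a toy example in plain Python. Everything here is a stand-in: `FROZEN_BACKBONE` plays the role of a pre-trained feature extractor (in practice, a CNN trained on a dataset such as ImageNet and loaded through a framework like PyTorch or TensorFlow), and the four samples, learning rate, and epoch count are assumptions for the sketch.

```python
import math

# Stand-in for pre-trained weights (assumption): maps 2 inputs to 2 features.
FROZEN_BACKBONE = [[1.0, -1.0], [-1.0, 1.0]]


def extract_features(x):
    """Frozen layer: linear map followed by ReLU. Never updated below."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x)))
            for row in FROZEN_BACKBONE]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fine_tune_head(samples, labels, epochs=200, lr=0.5):
    """Train only the new head (logistic regression) on the frozen features."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            f = extract_features(x)
            p = sigmoid(sum(w * fi for w, fi in zip(weights, f)) + bias)
            error = p - y  # gradient of the log loss with respect to the logit
            weights = [w - lr * error * fi for w, fi in zip(weights, f)]
            bias -= lr * error
    return weights, bias


def predict(x, weights, bias):
    f = extract_features(x)
    score = sum(w * fi for w, fi in zip(weights, f)) + bias
    return 1 if sigmoid(score) >= 0.5 else 0


# Small task-specific dataset (assumption): four labelled samples.
SAMPLES = [(2.0, 0.0), (3.0, 1.0), (0.0, 2.0), (1.0, 3.0)]
LABELS = [1, 1, 0, 0]

head_weights, head_bias = fine_tune_head(SAMPLES, LABELS)
predictions = [predict(x, head_weights, head_bias) for x in SAMPLES]
```

Only `head_weights` and `head_bias` change during training, which is why a handful of labelled samples is enough here. With a real pre-trained network the principle is the same, just with far more features and a larger head.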

What are the popular pre-trained models?

- MobileNet, like many models, is trained on the ImageNet dataset. What sets it apart is a design tailored to be resource-efficient on mobile and embedded devices without significantly compromising accuracy. This makes it well suited to scenarios with computational limitations, such as image recognition on mobile devices, real-time object identification, and augmented reality experiences.

- EfficientNet is a cutting-edge development in CNN designs that tackles the complexity of scaling models. It attains outstanding performance through a systematic scaling of model depth, width, and input resolution, yet stays efficient. Trained on the extensive ImageNet dataset, EfficientNet extracts potent features that lead to its superior capabilities. It is recognised for accuracy and efficiency in tasks like image categorisation, object recognition, and semantic image segmentation.

- Inception-v3, a member of the Inception series of CNN architectures, incorporates multiple inception modules, each with parallel convolutional layers of varying filter sizes. Trained on the expansive ImageNet dataset, Inception-v3 can identify complex visual patterns. Its architecture is designed to detect features at an array of scales, enabling it to perform various computer vision tasks, including but not limited to image recognition, object localisation, and detailed image categorisation.

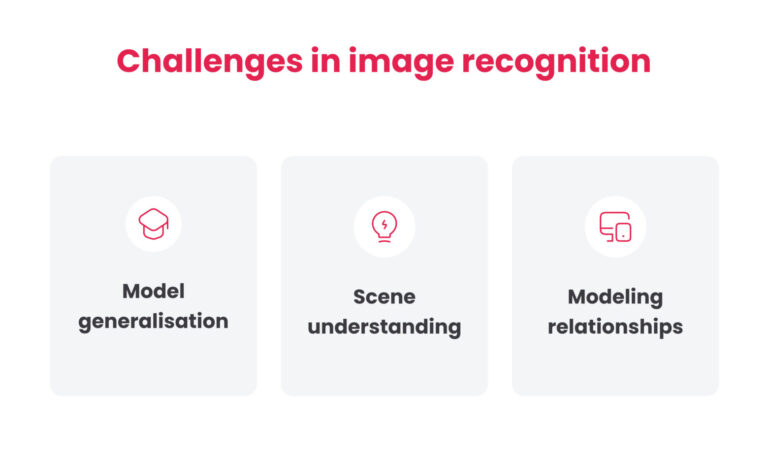

Challenges in image recognition

The rapid progress in image recognition technology is attributed to deep learning, a field that has thrived due to the creation of extensive datasets, the innovation of neural network models, and the discovery of new tech opportunities.

Deep neural networks, engineered for various image recognition applications, have outperformed older approaches that relied on manually designed image features.

Despite these achievements, deep learning in image recognition still faces many challenges that need to be addressed.

Model generalisation

A major challenge lies in training models that generalise to real-world settings they have not seen before.

Typically, a model is trained and assessed on a dataset that is randomly split into training and test sets, so both sets share the same data distribution.

In real-world applications, however, the test images often come from data distributions that differ from those used in training. The sensitivity of current models to such shifts in the data distribution can be a severe deficiency in critical applications.
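A tiny example shows how this can play out. The sketch below uses a nearest-centroid classifier on a single made-up feature (think of it as average image brightness); the data, the classifier choice, and the size of the shift are all assumptions for illustration, not a measurement of any real model.

```python
def fit_centroids(values, labels):
    """Mean feature value per class."""
    sums, counts = {}, {}
    for v, y in zip(values, labels):
        sums[y] = sums.get(y, 0.0) + v
        counts[y] = counts.get(y, 0) + 1
    return {y: sums[y] / counts[y] for y in sums}


def predict(value, centroids):
    """Assign the class whose centroid is closest to the value."""
    return min(centroids, key=lambda y: abs(value - centroids[y]))


def accuracy(values, labels, centroids):
    correct = sum(predict(v, centroids) == y for v, y in zip(values, labels))
    return correct / len(values)


# Hypothetical training data: class 0 is dark, class 1 is bright.
TRAIN_X = [1.0, 2.0, 3.0, 7.0, 8.0, 9.0]
TRAIN_Y = [0, 0, 0, 1, 1, 1]

# Test set drawn from the same distribution as the training data.
TEST_X = [1.5, 2.5, 7.5, 8.5]
TEST_Y = [0, 0, 1, 1]

# Same objects, but captured under brighter conditions (shifted distribution).
SHIFTED_X = [v + 4.0 for v in TEST_X]

centroids = fit_centroids(TRAIN_X, TRAIN_Y)
in_distribution_accuracy = accuracy(TEST_X, TEST_Y, centroids)
shifted_accuracy = accuracy(SHIFTED_X, TEST_Y, centroids)
```

The model is perfect on the test set that matches its training data, yet it misclassifies every dark object once the brightness shifts, even though nothing about the objects themselves has changed.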

Scene understanding

Beyond model training, complex scene understanding is an important topic that requires further investigation.

Besides recognising and locating objects in a scene, people can infer object-to-object relations, object attributes, and 3D scene layouts, and build hierarchies from them.

A wider understanding of scenes would enable richer interaction, but it requires knowledge beyond simple object identity and location. The task demands a cognitive understanding of the physical world, and current models are still a long way from that goal.

Modelling relationships

To thoroughly understand a scene, it is critically important to model the relationships and interactions between the objects in it. Imagine two pictures with a man and a dog.

If one shows the person walking the dog and the other shows the dog barking at the person, what is shown in these images has an entirely different meaning.

Thus, the underlying scene structure extracted through relational modelling can help to compensate when current deep learning methods falter due to limited data. This remains an active area of research, with much room for exploration.

Bottom line

Facial recognition, self-driving cars, and medical image analysis all rely on computer vision to work. At the core of computer vision lies image recognition technology, which empowers machines to identify and understand the content of an image and categorise it accordingly.

AI and machine learning models for image recognition have significantly narrowed the gap between computer and human visual capabilities, but there is still considerable ground to cover.

The future of image recognition lies in developing more adaptable, context-aware AI models that can learn from limited data and reason about their environment as comprehensively as humans do.

Altamira helps clients understand, identify, and implement an AI or ML model for image recognition that best fits their business.

Also, we provide full-cycle software development so you can build an AI image recognition model from scratch or integrate image recognition technology within the existing software system. Entrust your digital transformation to our expertise!

Wonder how AI capabilities may empower your business? Join our free AI discovery workshop! Contact us to get more information.

FAQ

What are the most effective image recognition AI models?

Some of the most widely used models include Convolutional Neural Networks (CNNs) like ResNet, EfficientNet, and DenseNet.

Vision Transformers (ViT) and modernised convolutional designs (like ConvNeXt) are gaining traction for more recent and advanced use cases due to their performance on large datasets. The choice depends on data size, computational resources, and task complexity.

How to integrate image recognition algorithms into existing systems?

Start by selecting a model that fits your use case and constraints. You can either:

- Use a pre-trained model, either through a cloud API (e.g., Amazon Rekognition or Google Cloud Vision) or through an open-source framework like PyTorch or TensorFlow, or

- Deploy your own model as a microservice and connect it to your system via REST or gRPC.

As part of your integration pipeline, you’ll also need to handle preprocessing (resizing, normalising images) and postprocessing (translating model outputs into actionable results).
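As a rough idea of what that glue code looks like, here is a minimal sketch in plain Python. The 0.5 mean and standard deviation, the 0.6 confidence cut-off, the class names, and the sample logits are assumptions for illustration; a real pipeline should use the input size and normalisation statistics the chosen model was trained with, typically via an image library rather than nested lists.

```python
import math


def resize_nearest(image, out_h, out_w):
    """Nearest-neighbour resize of a 2D grayscale image (list of rows)."""
    in_h, in_w = len(image), len(image[0])
    return [
        [image[r * in_h // out_h][c * in_w // out_w] for c in range(out_w)]
        for r in range(out_h)
    ]


def normalise(image, mean=0.5, std=0.5):
    """Scale 0-255 pixel values to [0, 1], then standardise."""
    return [[(p / 255.0 - mean) / std for p in row] for row in image]


def softmax(logits):
    """Turn raw model scores into probabilities that sum to 1."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def postprocess(logits, class_names, min_confidence=0.6):
    """Translate raw model outputs into a label the business logic can act on."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    label = class_names[best] if probs[best] >= min_confidence else "uncertain"
    return {"label": label, "confidence": probs[best]}


# Hypothetical 4x4 input image and class list.
RAW_IMAGE = [
    [0, 0, 255, 255],
    [0, 0, 255, 255],
    [255, 255, 0, 0],
    [255, 255, 0, 0],
]
CLASS_NAMES = ["bear", "bicycle", "other"]

model_input = normalise(resize_nearest(RAW_IMAGE, 2, 2))

# Logits would come from the model; these values are made up.
confident_result = postprocess([2.0, 0.5, 0.1], CLASS_NAMES)
unsure_result = postprocess([1.0, 1.0, 1.0], CLASS_NAMES)
```

Returning an explicit "uncertain" label for low-confidence outputs is one possible design choice: it lets the surrounding system route those images to a human or a fallback rule instead of acting on a weak guess.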

What are the key challenges when training an image recognition model for business use?

The most common challenges are:

- Data quality and quantity: Business data is often messy, inconsistent, or limited

- Labelling: High-quality annotations are expensive and time-consuming

- Overfitting: Often occurs with small or domain-specific datasets

- Model drift: The model may degrade over time as real-world data shifts

- Compliance and privacy: Relevant in industries with sensitive data, like healthcare or finance

How can businesses use transfer learning in image recognition projects?

Transfer learning lets you start with a model that’s already trained on a large dataset, then fine-tune it on your specific data. This means:

- You need fewer labelled examples

- Training is faster

- And you usually get better performance out of the gate

Most frameworks support this workflow with just a few lines of code, making it accessible even for small teams.

How to build an image recognition model?

Start by defining what you are trying to identify in the images. Once that’s clear, collect a dataset that reflects the real-world conditions your model will face. Clean and label the images—this step often takes more time than expected.

Next, pick a model architecture. For most use cases, a pre-trained convolutional neural network (CNN) like ResNet or EfficientNet is a practical choice. Fine-tune it using your labelled dataset with a deep learning framework like PyTorch or TensorFlow.

Train the model, validate it with held-out data, and iterate. The key is to monitor performance metrics and make adjustments as needed—data quality, architecture tweaks, or regularisation strategies.

Finally, deploy it in an environment where it can process real images—whether that’s on a server, in the cloud, or on edge devices.

What industries are currently seeing the most impact from image recognition technologies?

Almost every sector uses image recognition algorithms. For example, in retail, image recognition is used for shelf monitoring, visual search, and customer behaviour tracking. Healthcare benefits from image recognition algorithms through medical imaging (e.g., radiology, dermatology) and diagnostics.

In manufacturing, image recognition algorithms handle quality control, defect detection, and equipment monitoring. One of the most well-known uses comes from security: facial recognition, surveillance, and anomaly detection.

These use cases are not just pilot experiments; many are already in production.