
Containerization and splitting applications into microservices are among the most popular trends in today's IT industry. The main purpose of both is to package a project, or a functionally isolated part of it, into a self-sufficient image that can be launched on different platforms and in as many instances as needed.

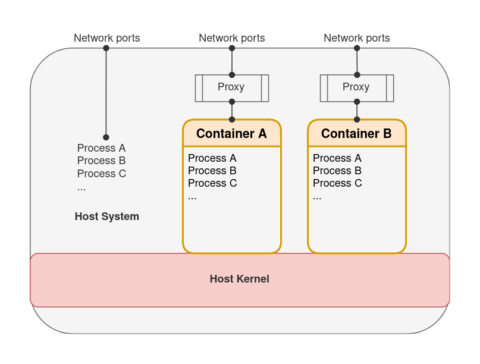

A container is a so-called "system within a system", and it is launched from a specially built image. The image includes all the components (programs, libraries, configuration) needed to run the container. When you start a container on a host system, separate namespaces are created for all processes belonging to that container. As a result, those processes cannot see the surrounding host system or other containers.

Here is a picture demonstrating how this works in practice, and below we explain the scheme in detail.

#1 Containerization

Let's begin with containerization, because it is the first and most important element of the scheme above. Its key advantages are:

- Self-sufficiency of the image. An image built with Docker contains all the necessary components and can be launched without modification on any system that supports this kind of containerization. All major modern operating systems support this technology, which makes it very convenient.

- Isolation. You can run several containers simultaneously on one system, even if they have different environment requirements (for example, different software versions or configuration layouts).

- Cross-platform support. A Linux container can also be launched on a Windows or macOS host (Docker Desktop runs it inside a lightweight Linux virtual machine).

- Distribution. An image can be published to a registry for public or authorized access.

Together, these properties make containerization an indispensable tool for developing and testing a project. Deploying projects with the same approach is the logical next step.

#2 CI/CD

Continuous Integration (CI), together with Continuous Delivery (CD), is the second element of the scheme shown at the beginning. In simple terms, this concept means that the code of the whole project is automatically tested every time you change it, and that changes are deployed frequently. This lets you react quickly to code issues and to incompatibilities between project components, and deploy changes step by step instead of letting problems accumulate to a critical point.
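To make the idea concrete, here is a minimal Python sketch of what a CI/CD pipeline does on every change. It runs the stages in order and stops at the first failure, so a broken commit never reaches deployment. The `run_pipeline` helper and the stage names are hypothetical. Real platforms such as GitLab express the same logic declaratively.

```python
from typing import Callable, List, Tuple

# A stage is a name plus a callable that returns True on success.
Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> List[str]:
    """Run stages in order and stop at the first failing one (hypothetical helper).

    Returns the names of the stages that were executed, so the caller
    can see where the pipeline stopped.
    """
    executed: List[str] = []
    for name, step in stages:
        executed.append(name)
        if not step():
            # A failed stage blocks every later stage, including deploy.
            print(f"stage '{name}' failed; pipeline stopped")
            break
    return executed


if __name__ == "__main__":
    # Every commit triggers the whole chain: test -> build -> deploy.
    print(run_pipeline([
        ("test", lambda: True),
        ("build", lambda: True),
        ("deploy", lambda: True),
    ]))

    # A failing test stops the chain before anything is deployed.
    print(run_pipeline([
        ("test", lambda: False),
        ("build", lambda: True),
        ("deploy", lambda: True),
    ]))
```

Stopping early is the point of the concept. Each change is validated on its own, so a problem is caught at the commit that introduced it.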

CI/CD has become a technological standard, available both as part of leading development platforms and as standalone solutions. For our work we chose GitLab as the most promising and rapidly developing platform. We will come back to it a little later and describe some of its capabilities for building, testing, and deploying projects to cloud services.

#3 Cloud-based service

The cloud-based service is the third and last element of our scheme. In our case, we selected Amazon Elastic Container Service (ECS), a system for running containers, and Amazon Elastic Container Registry (ECR), a storage service for Docker images.

Now back to GitLab and how our scheme uses it. First of all, GitLab exists in two forms:

- as a SaaS (GitLab.com);

- as a self-managed installation on your own servers.

Both offer the same functionality, so our scheme works with either of them. The most important part of GitLab for us is its CI/CD automation mechanism.

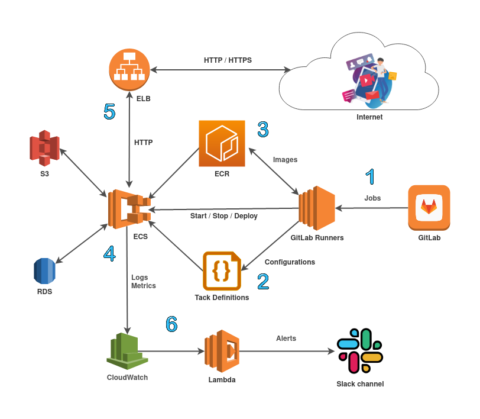

[1] Whenever the Git repository is updated, GitLab launches a series of jobs, defined in the specially created .gitlab-ci.yml file, that build and test the project. These jobs are executed by GitLab Runners, which can run anywhere, from a developer's workstation to dedicated servers. At the time of writing, the free GitLab.com plan included 2,000 minutes of shared Runner time per month.
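The following Python sketch shows the kind of structure a .gitlab-ci.yml for this scheme might contain. It is not the authors' actual file. The script builds the configuration as a dict and prints it as JSON, which YAML parsers generally accept for simple content like this. Several things are assumptions: the Runner has Docker and the AWS CLI installed with AWS credentials and `AWS_DEFAULT_REGION` set. The variables `ECR_REGISTRY`, `ECR_REPOSITORY`, `ECS_CLUSTER`, `ECS_SERVICE` and `TASK_FAMILY`, the `make test` entry point, and the `taskdef.json` file are all hypothetical. `CI_COMMIT_SHORT_SHA` and `CI_COMMIT_BRANCH` are predefined GitLab variables.

```python
import json

# CI_COMMIT_SHORT_SHA is predefined by GitLab; ECR_REGISTRY and
# ECR_REPOSITORY are hypothetical CI/CD variables set in project settings.
IMAGE = "$ECR_REGISTRY/$ECR_REPOSITORY:$CI_COMMIT_SHORT_SHA"


def build_pipeline() -> dict:
    """Return a .gitlab-ci.yml structure with test, build and deploy stages.

    Assumes the Runner has Docker and the AWS CLI installed and that AWS
    credentials and AWS_DEFAULT_REGION are provided as CI/CD variables.
    """
    return {
        "stages": ["test", "build", "deploy"],
        "run_tests": {
            "stage": "test",
            "script": ["make test"],  # hypothetical test entry point
        },
        "build_image": {
            "stage": "build",
            "script": [
                # Log in to ECR, then build and push the image [3]
                "aws ecr get-login-password | docker login --username AWS "
                "--password-stdin $ECR_REGISTRY",
                f"docker build -t {IMAGE} .",
                f"docker push {IMAGE}",
            ],
        },
        "deploy_service": {
            "stage": "deploy",
            "rules": [{"if": '$CI_COMMIT_BRANCH == "main"'}],
            "script": [
                # taskdef.json (hypothetical) is assumed to reference the new image
                "aws ecs register-task-definition --cli-input-json file://taskdef.json",
                # Without a revision, ECS uses the family's latest ACTIVE revision
                "aws ecs update-service --cluster $ECS_CLUSTER "
                "--service $ECS_SERVICE --task-definition $TASK_FAMILY",
            ],
        },
    }


if __name__ == "__main__":
    print(json.dumps(build_pipeline(), indent=2))
```

By default, jobs in a GitLab stage start only after all jobs in the previous stage have succeeded. This gives the same stop-on-failure behavior that CI relies on.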

Once the code has been tested, the image is built. This can be thought of as a "local deployment" into a virtually isolated system: the project goes through complete building, initialization, and configuration. [3] The built image is pushed to an ECR repository (or to any other registry if needed; GitLab also has its own image registry). The image can then be used for further deployment or for acceptance tests.
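Before the image can be pushed to ECR, it needs a tag in ECR's registry format, `<account_id>.dkr.ecr.<region>.amazonaws.com/<repository>:<tag>`. The hypothetical Python helper below assembles that name and rejects obviously invalid input. It checks for a 12-digit account ID and enforces Docker's tag rules: up to 128 characters from letters, digits, `_`, `.` and `-`, not starting with `.` or `-`. The account ID, region, and repository name used in the example are placeholders.

```python
import re

# Docker tag rules: 1-128 chars, first char alphanumeric or '_'.
TAG_RE = re.compile(r"[A-Za-z0-9_][A-Za-z0-9_.-]{0,127}")


def ecr_image_uri(account_id: str, region: str, repository: str, tag: str) -> str:
    """Build the full ECR image name for `docker build -t` / `docker push`."""
    if not re.fullmatch(r"\d{12}", account_id):
        raise ValueError("AWS account IDs are exactly 12 digits")
    if not TAG_RE.fullmatch(tag):
        raise ValueError(f"invalid image tag: {tag!r}")
    return f"{account_id}.dkr.ecr.{region}.amazonaws.com/{repository}:{tag}"


if __name__ == "__main__":
    # Placeholder values; the tag is a short commit SHA.
    print(ecr_image_uri("123456789012", "eu-central-1", "my-app", "3f2a1c9"))
```

Tagging each image with the commit SHA, rather than reusing `latest`, gives every pipeline run a unique and traceable image. That makes acceptance tests and rollbacks unambiguous.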

[4] To deploy the containerized project, we chose ECS Fargate, a serverless platform for running Docker images. The deployment itself was divided into two stages. [2] In the first stage, the configuration (the Task Definition) is updated with the new image name. In the second stage, the ECS Fargate service is updated to use the new configuration. ECS launches the containers of the new deployment, checks that they are healthy, switches Elastic Load Balancer (ELB) traffic to them, and only then stops the containers of the previous deployment. [4][5]
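The first deployment stage can be sketched in Python as pure dict manipulation. The function takes a task definition as returned by ECS's DescribeTaskDefinition, drops the read-only fields that RegisterTaskDefinition does not accept, and swaps in the new image for one container. With boto3, the input would come from `ecs.describe_task_definition(taskDefinition=...)["taskDefinition"]`. The result would be passed to `ecs.register_task_definition(**new_def)`, and the second stage would be `ecs.update_service(cluster=..., service=..., taskDefinition=<new ARN>)`. Those calls are left out so the sketch runs without AWS access. The helper name `with_new_image` is hypothetical.

```python
import copy

# Fields returned by DescribeTaskDefinition that RegisterTaskDefinition rejects.
READ_ONLY_FIELDS = (
    "taskDefinitionArn", "revision", "status", "requiresAttributes",
    "compatibilities", "registeredAt", "registeredBy", "deregisteredAt",
)


def with_new_image(task_def: dict, container_name: str, image: str) -> dict:
    """Return a registrable copy of task_def with one container's image replaced."""
    new_def = copy.deepcopy(task_def)  # never mutate the caller's dict
    for field in READ_ONLY_FIELDS:
        new_def.pop(field, None)
    for container in new_def["containerDefinitions"]:
        if container["name"] == container_name:
            container["image"] = image
            return new_def
    raise KeyError(f"no container named {container_name!r}")


if __name__ == "__main__":
    described = {  # trimmed example of a DescribeTaskDefinition result
        "family": "web",
        "taskDefinitionArn": "arn:aws:ecs:eu-central-1:123456789012:task-definition/web:3",
        "revision": 3,
        "status": "ACTIVE",
        "requiresCompatibilities": ["FARGATE"],
        "compatibilities": ["EC2", "FARGATE"],
        "containerDefinitions": [{"name": "app", "image": "my-app:old"}],
    }
    print(with_new_image(described, "app", "my-app:3f2a1c9"))
```

The behavior described above (start new containers, check their health, switch traffic, then stop the old ones) is ECS's rolling update. The service's `deploymentConfiguration` settings `minimumHealthyPercent` and `maximumPercent` control how many old and new tasks may run at the same time during the switch.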

The project can be exposed to the web either directly via its IP address or through the ELB [5]. With the ELB, AWS issues and renews the SSL certificate automatically and at no charge.

[6] All logs of the project (the output of the containers) are stored in CloudWatch Logs. CloudWatch filters and metrics are built on top of these logs, and notifications are sent to a Slack channel through a dedicated Lambda function.
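Below is a minimal Python sketch of such a Lambda function. Note that it uses a related but different mechanism from the one described: a CloudWatch Logs subscription filter that invokes Lambda directly. That filter delivers matching log events as base64-encoded, gzip-compressed JSON under `event["awslogs"]["data"]`. A setup based on metrics and alarms would typically reach Lambda through SNS with a different event format. The message is posted to a Slack incoming webhook. The `SLACK_WEBHOOK_URL` environment variable and the 10-line message limit are assumptions.

```python
import base64
import gzip
import json
import os
import urllib.request


def decode_log_payload(event: dict) -> dict:
    """Decode the base64 + gzip payload delivered by a subscription filter."""
    compressed = base64.b64decode(event["awslogs"]["data"])
    return json.loads(gzip.decompress(compressed))


def format_slack_message(payload: dict, limit: int = 10) -> dict:
    """Build a Slack webhook body from the first `limit` log lines."""
    lines = [e["message"] for e in payload.get("logEvents", [])[:limit]]
    header = f"*{payload['logGroup']}* / {payload['logStream']}"
    return {"text": "\n".join([header, *lines])}


def lambda_handler(event, context):
    payload = decode_log_payload(event)
    if payload.get("messageType") == "CONTROL_MESSAGE":
        # CloudWatch sends these to check the destination; nothing to forward.
        return {"status": "skipped"}
    body = json.dumps(format_slack_message(payload)).encode("utf-8")
    request = urllib.request.Request(
        os.environ["SLACK_WEBHOOK_URL"],  # hypothetical env var
        data=body,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=5) as response:
        return {"status": response.status}
```

Keeping the decoding and formatting in separate functions lets them be tested locally without AWS or Slack. Only `lambda_handler` touches the network.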

Are there any alternatives?

The scheme intentionally does not use GitLab's built-in development and deployment automation (Auto DevOps), because this keeps it more controllable and portable. It can be reproduced without GitLab on any other platform with CI/CD support, such as GitHub or Git + Jenkins.

You can also use Amazon's native services (CodeCommit, CodeBuild, CodeDeploy) to integrate the entire development environment into AWS.